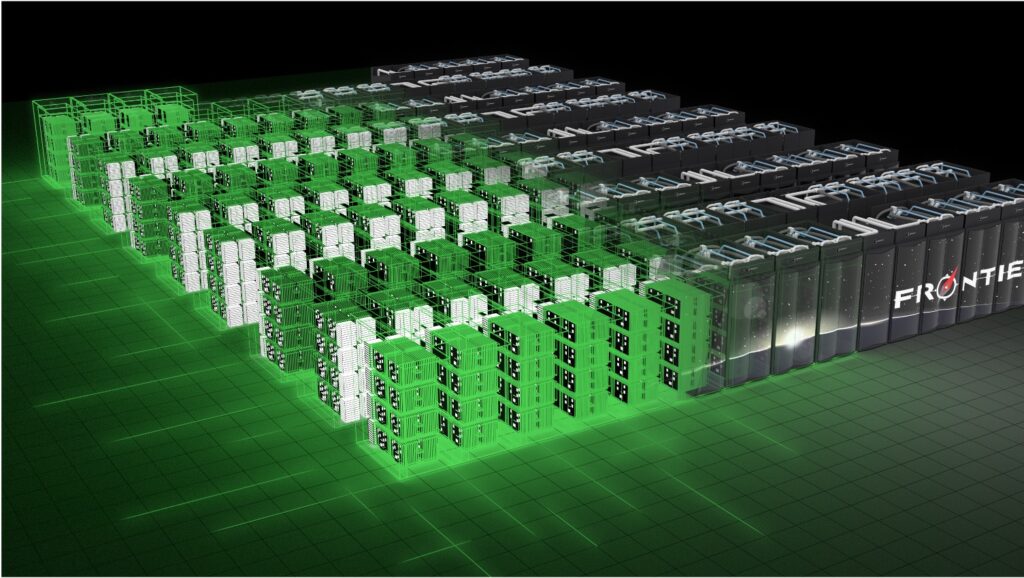

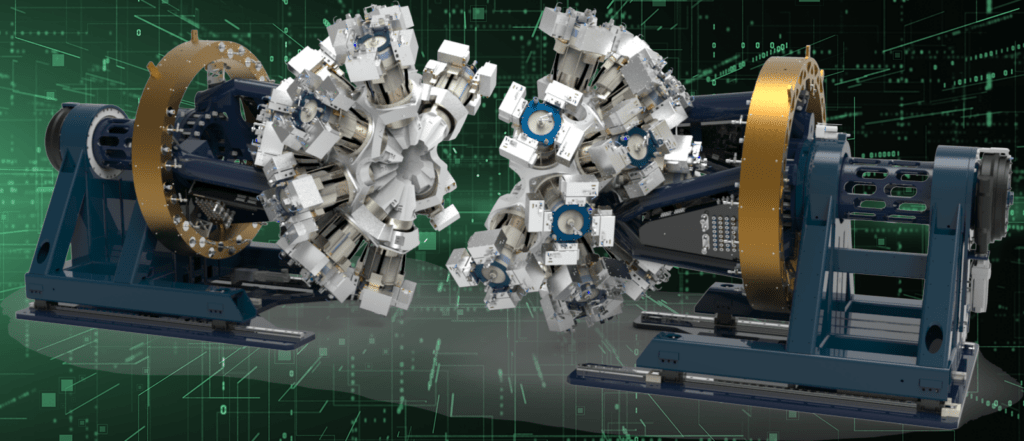

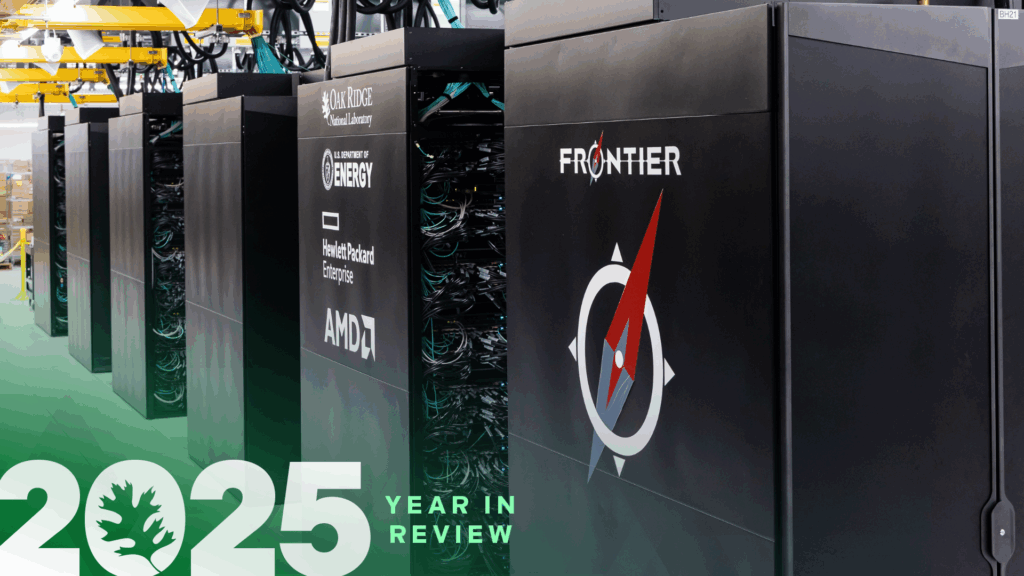

In 2025, high-performance computing and rapid advances in artificial intelligence pushed the boundaries of scientific discovery, accelerating progress across a wide spectrum of disciplines. Deep collaborations among leading academic, industrial, and government partners fueled major breakthroughs in areas such as simulating cellular machinery, accelerating AI, integrating quantum and classical computing…

Angela Gosnell, December 22, 2025