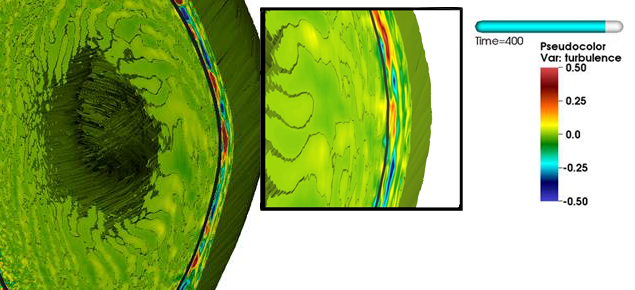

C.S. Chang of Princeton Plasma Physics Laboratory (PPPL) is researching “blobby” turbulence, which greatly affects fusion reactor performance. This visualization shows the turbulence front from the plasma edge being spread inward under the central heat source. The spatial turbulence amplitude distribution is just enough to produce the outward heat transport to expel the centrally deposited heat to the edge.

Credit: Dave Pugmire, ORNL.

INCITE campaign successful during program year in which Titan became GPU-accelerated.

Some of the most ambitious users of Oak Ridge National Laboratory’s (ORNL’s) Titan supercomputer had a great year in 2013, pushing the envelope in fields ranging from nuclear fusion to astrophysics.

These were participants in the Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program, world-class projects able to solve challenging problems using Titan’s 18,688 compute nodes. The Oak Ridge Leadership Computing Facility (OLCF) allocated 1.94 billion processor hours to 32 computational research projects in 2013.

The 2013 program took place during a time of major upgrades to Titan. By adding a graphics processing unit (GPU) accelerator to the 16-core central processing unit (CPU) on each node, the OLCF substantially increased Titan’s computing capability, enabling INCITE researchers to reach unprecedented science achievements.

Moreover, as a result of a consistent, concerted effort to keep the lines of communication open between the OLCF and users, the center has achieved increasing satisfaction among INCITE users over the past seven years. The satisfaction ratings in 2013 were the highest ever. Eighty-nine percent of INCITE respondents rated their overall opinion of OLCF user support services as “satisfied” or “very satisfied.”

INCITE was created to accelerate computational advancements in science and engineering research. The program focuses on projects that could be completed only on systems such as Titan, the United States’ most powerful supercomputer. This competitive program is open to research teams from industry, academia, national laboratories, and other federal agencies.

Below are a few of the remarkable achievements of INCITE researchers using Titan in 2013.

The bleeding “edge” of fusion research

With the help of Titan, C. S. Chang of Princeton Plasma Physics Laboratory is shedding light on a long-known but little-understood phenomenon called “blobby” turbulence, which greatly affects fusion reactor performance.

Blobby turbulence happens when formations of high-density clumps flow together and move large amounts of edge plasma around, affecting edge and core performance. Understanding these blobs and how they affect performance is vital to progress in fusion energy research.

“What happens at the edge is what determines the steady fusion performance at the core,” said Chang.

Using more than 102 million Titan core hours, Chang was able to run a full-scale production simulation of tokamak plasma. He and his team optimized the simulation code to run four times as fast on the GPU-enhanced Titan as it had run previously on a similar CPU-only system. The code, XGC1, exhibited efficient weak and strong scalability, enabling it to use all of Titan’s 18,688 nodes.
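For readers unfamiliar with the terms, weak and strong scalability are usually summarized as efficiency ratios. The short sketch below uses the common textbook definitions; the function names and all timing values are hypothetical and chosen only for illustration. They are not measurements from XGC1 or Titan.

```python
def strong_scaling_efficiency(base_nodes, base_time, nodes, time):
    """Strong scaling: the total problem size is fixed.

    Ideally, run time shrinks in proportion to the node count,
    so an efficiency of 1.0 means perfect scaling.
    """
    return (base_nodes * base_time) / (nodes * time)


def weak_scaling_efficiency(base_time, time):
    """Weak scaling: the problem size per node is fixed.

    Ideally, run time stays constant as nodes (and total work) grow,
    so an efficiency of 1.0 means perfect scaling.
    """
    return base_time / time


# Hypothetical timings, in seconds (illustrative only).
strong = strong_scaling_efficiency(base_nodes=1000, base_time=100.0,
                                   nodes=4000, time=30.0)
weak = weak_scaling_efficiency(base_time=100.0, time=110.0)

print(f"strong-scaling efficiency: {strong:.2f}")
print(f"weak-scaling efficiency:   {weak:.2f}")
```

A code that keeps both ratios close to 1.0 as the node count grows can make productive use of a full machine rather than only a fraction of it.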

For more information on Chang’s 2013 INCITE project, visit https://www.olcf.ornl.gov/2014/02/14/the-bleeding-edge-of-fusion-research/

Investigating Earth’s inner workings

The Earth’s interior is responsible for earthquakes, volcanic activity, and other geological goings-on, yet we extrapolate most of our knowledge about subsurface activity from surface observations. Jeroen Tromp of Princeton University used Titan to model the Earth’s mantle to better understand the tectonic processes that cause catastrophic damage across the world. His team’s goal is global seismic tomography, using the waves generated by earthquakes to create an image of the mantle.

The mountains of raw data created by these simulations pose a challenge even for Titan’s file system. With help from the OLCF Scientific Computing Group, Tromp was able to use the Adaptable I/O System (ADIOS) software. A 2013 R&D 100 Award winner, ADIOS (https://www.olcf.ornl.gov/center-projects/adios/) is a parallel data management framework that can read, write, process, and visualize data outside the code running on Titan. Integrating ADIOS into Tromp’s seismology simulations greatly improved the performance of the data workflow to model the Earth’s mantle.

Using almost 52 million hours on Titan, Tromp and his team were able to map the Earth under Southern California to a depth of 40 kilometers and beneath Europe to a depth of 700 kilometers. They used seismic waves with periods of 9 seconds, meaning each wave takes 9 seconds to complete one up-and-down cycle. The team hopes to improve its modeling to map a greater area of the mantle at higher frequency, using 1-second waves to increase the precision of its simulations.
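The relationship between wave period and frequency can be made concrete with a little arithmetic. The sketch below is only an illustration of the standard relation (frequency is the reciprocal of period); the helper name is hypothetical and has nothing to do with the team's simulation code.

```python
def period_to_frequency_hz(period_s: float) -> float:
    """Convert a wave period in seconds to a frequency in hertz (f = 1 / T)."""
    if period_s <= 0:
        raise ValueError("period must be positive")
    return 1.0 / period_s


current_hz = period_to_frequency_hz(9.0)  # 9-second waves used in 2013
target_hz = period_to_frequency_hz(1.0)   # 1-second waves the team hopes to use

print(f"9-second period: {current_hz:.3f} Hz")
print(f"1-second period: {target_hz:.3f} Hz")
print(f"frequency increase: {target_hz / current_hz:.1f}x")
```

Moving from 9-second to 1-second waves raises the frequency ninefold, which is why the shorter period corresponds to finer detail in the resulting image.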

Witnessing our own cosmic dawn

According to cosmological theory, the universe was a fully ionized mass of electrically charged particles for thousands of years after the Big Bang. After about 400,000 years, protons and electrons combined into a transparent soup of un-ionized, neutral gas particles, consisting mainly of hydrogen. About 150 million years later, the first stars and galaxies began to form as hydrogen clouds condensed enough to support nuclear fusion.

The radiation created from the birth, life, and death of these stars ionized, or ripped apart, the remaining hydrogen molecules in space, heating them to extreme temperatures. Patchworks of ionized zones spread their energy outward, until the entire universe was “reionized.” This period is called the Epoch of Reionization (EOR), and it is the focus of research conducted by Paul Shapiro and his international team of collaborators.

Shapiro, a theoretical astrophysicist from the University of Texas–Austin, has teamed up with Kyungjin Ahn of Chosun University in South Korea, Ilian Iliev of the University of Sussex in the United Kingdom, and Romain Teyssier of the University of Zurich in Switzerland to study the EOR. The team has narrowed its focus to the Local Group, or those galaxies within 150 light years of our own Milky Way galaxy. The standard theory of cosmology overpredicts the number of dwarf galaxies in the Local Group, so Shapiro and his colleagues are looking to Titan for answers.

Modeling a chunk of the universe large enough to be representative is no small feat; thankfully, Titan is up to the task. Using a GPU-accelerated code called RAMSES-CUDATON, Shapiro and his team used 81.3 million core hours on Titan to run the largest-ever simulation of the universe, modeling three complex phenomena (radiation, hydrodynamics, and gravity) to observe the formation or suppression of stars in the Local Group.

The code was optimized to fully utilize Titan’s hybrid CPU–GPU nodes, which gave it an eightfold increase in performance compared with CPU-only operation. RAMSES-CUDATON was scaled to run on about 44 percent of Titan’s nodes at one time, but it has the potential to scale even further.

The team hopes its data will lead to new discoveries about the theory of reionization and its feedback effect on galaxy and star formation in the universe at large.

The future of innovation

In 2014, the INCITE program has allocated 2.25 billion core hours on Titan to 30 projects. The 2015 INCITE program will be accepting applications starting Wednesday, April 16th, 2014.

With an increase in core hour allocations in 2014, Titan will continue to be a pivotal resource to achieve groundbreaking science in the years to come.

More Information:

For a list of 2013 OLCF INCITE projects, visit https://www.olcf.ornl.gov/leadership-science/2013-incite-projects/

For the complete list of the 2013 INCITE projects with a description for each, visit https://www.doeleadershipcomputing.org/awards/2013INCITEFactSheets.pdf

For a list of 2014 OLCF INCITE projects, visit https://www.olcf.ornl.gov/leadership-science/2014-incite-projects/ —Dixie Daniels