Researchers used the world’s fastest supercomputer for open science to train an artificial intelligence model that captures magnetic turbulence within a plasma in unprecedented detail.

Results from the model, trained on the Frontier supercomputer at the Department of Energy’s Oak Ridge National Laboratory, could support research ranging from modeling a supernova to building the next generation of nuclear fusion reactors.

“This kind of capability has long been the dream of astrophysicists and many other scientists,” said Eliu Huerta, a computational scientist at Argonne National Laboratory who oversaw the study led by his graduate student, Semih Kacmaz. “It’s the first time this level of insight via AI has been achieved for systems of this complexity.”

Scientists used the Frontier supercomputer, the world’s first exascale system, to train an artificial intelligence model that captures magnetic turbulence within a plasma in unprecedented detail. Credit: Carlos Jones/ORNL, U.S. Dept. of Energy

A stormy question

Turbulence — the unstable flow of heat and mass — occurs everywhere, from Earth’s atmosphere to the coupling between the electrically charged fluids, or plasmas, and the magnetic fields that surround stars. The churning and swirling of those plasmas creates magnetohydrodynamic (MHD) turbulence, a kind of cosmic storm that affects everything from Earth’s magnetic field to the shaping of stars and galaxies.

Improved understanding of MHD turbulence could allow scientists to explore more astrophysical scenarios, design better experiments and improve predictions of cosmic events.

Scientists have typically tried to model these turbulent patterns using approximations such as the Reynolds-averaged Navier-Stokes (RANS) approach, which solves a set of time-averaged equations that offer a kind of shortcut to predicting turbulent flow. But these approximations too often omit key details and fail to account for all relevant physics.
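As a rough illustration of where that shortcut loses information, the textbook form of the time-averaged equations for an incompressible fluid without a magnetic field can be written as follows. The notation here is standard but is not taken from the study: each velocity component is split into a time average and a fluctuation, and averaging the momentum equation leaves behind one extra term built from the fluctuations.

```latex
\begin{align}
u_i &= \bar{u}_i + u_i' , \\
\frac{\partial \bar{u}_i}{\partial t}
  + \bar{u}_j \frac{\partial \bar{u}_i}{\partial x_j}
  &= -\frac{1}{\rho} \frac{\partial \bar{p}}{\partial x_i}
     + \nu \frac{\partial^2 \bar{u}_i}{\partial x_j \partial x_j}
     - \frac{\partial\, \overline{u_i' u_j'}}{\partial x_j} .
\end{align}
```

The last term, the Reynolds stress, depends on the fluctuations that the averaging removed, so it has to be approximated with a closure model. That approximation is one place where the fine structure of the turbulence can be lost.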

“The more chaotic the system, the harder to simulate it,” Huerta said. “Traditional AI models struggle to reproduce these patterns for the same reason: because these interactions are so complex and computationally demanding to reproduce, the models smooth out the fine details that define turbulence and we lose the insights we seek.”

Researchers used ORNL’s Frontier supercomputer to train an AI model that captures the details of magnetic turbulence within a plasma. Credit: Semih Kacmaz

A marriage of models

To succeed where other approaches failed, Kacmaz settled on a two-stage strategy for modeling the MHD patterns. The first stage called for a physics-informed neural operator — a specialized AI architecture that learns the mapping, or relationships, between various sets of functions that can be used to express and solve the governing equations for a physical system. Examples of this type of AI include the algorithms that learn to recognize atmospheric patterns for weather forecasting.
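To make the idea of learning a mapping between functions more concrete, here is a minimal sketch of one common neural-operator building block, a Fourier-space layer. The article does not say which operator architecture the team used, so the layer type, the function name `spectral_layer`, and the random weights are all illustrative assumptions. The point of the sketch is that the same weights act on a function regardless of how finely it is sampled.

```python
import numpy as np

def spectral_layer(u, weights):
    """Scale the lowest Fourier modes of a sampled 1D function.

    Hypothetical stand-in for one layer of a neural operator; in a real
    model the weights would be learned, not drawn at random.
    """
    n = u.shape[0]
    u_hat = np.fft.rfft(u)
    out_hat = np.zeros_like(u_hat)
    m = len(weights)
    out_hat[:m] = u_hat[:m] * weights  # act only on the retained modes
    return np.fft.irfft(out_hat, n=n)

def sample_input(n):
    """Sample the same band-limited function on an n-point periodic grid."""
    x = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
    return np.sin(x) + 0.5 * np.cos(3.0 * x)

rng = np.random.default_rng(0)
weights = rng.standard_normal(8) + 1j * rng.standard_normal(8)

out_coarse = spectral_layer(sample_input(64), weights)
out_fine = spectral_layer(sample_input(128), weights)

# The fine-grid output, read at every second point, matches the coarse one.
same_function = np.allclose(out_fine[::2], out_coarse)
```

Because the layer is defined on Fourier modes rather than on grid points, `same_function` is true here: the output is the same underlying function at both resolutions.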

The second stage used a score-based diffusion model — a generative AI framework that synthesizes complex data distributions by learning to reverse the gradual addition of noise to data. Examples of this type of model include the algorithms that refine satellite photos by learning to recognize and remove haze or cloud cover.
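The noise-reversal idea can be sketched on a toy signal. This is not the team's model: the score below is computed analytically from the known clean signal, whereas a trained network would have to estimate it from noisy data alone. The function names and the noise level `sigma` are illustrative assumptions.

```python
import numpy as np

def add_noise(x0, sigma, rng):
    """Forward step: perturb clean data with Gaussian noise of scale sigma."""
    return x0 + sigma * rng.standard_normal(x0.shape)

def conditional_score(x_noisy, x0, sigma):
    """Gradient of log N(x_noisy; x0, sigma^2 I) with respect to x_noisy.

    A score-based model is trained to approximate this kind of quantity
    without being given x0.
    """
    return -(x_noisy - x0) / sigma**2

def denoise(x_noisy, score, sigma):
    """Reverse step: move the noisy sample along the score by sigma^2."""
    return x_noisy + sigma**2 * score

rng = np.random.default_rng(0)
x0 = np.sin(np.linspace(0.0, 2.0 * np.pi, 64))  # toy "clean" signal
sigma = 0.5

x_noisy = add_noise(x0, sigma, rng)
x_rec = denoise(x_noisy, conditional_score(x_noisy, x0, sigma), sigma)
```

With the exact score, a single reverse step recovers the clean signal up to rounding. A learned score is only an approximation, which is why practical diffusion models take many small denoising steps across a schedule of noise levels.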

Training those models required the computational power to generate thousands of detailed plasma simulations across turbulence levels from the mildest to the most extreme. The team received an allocation of computing time on Frontier, the exascale flagship supercomputer at ORNL’s Oak Ridge Leadership Computing Facility, capable of peak speeds of 2 exaflops, or 2 quintillion calculations per second.

Frontier’s speed enabled the team to train the neural operators on the physics needed to capture the large-scale structure of MHD turbulence and to train the diffusion model on the finer details, such as the smaller eddies and flows within the larger turbulence patterns. Together, the models acted as a kind of AI tag team for simulating the turbulence.

“Frontier was a lifesaver for us,” Kacmaz said. “We used Frontier to generate high-fidelity datasets to train our diffusion model and to train our physics-informed neural operators. Those steps required tremendous amounts of computations that had been a bottleneck for us, and Frontier made them practical.”

The two-step solution

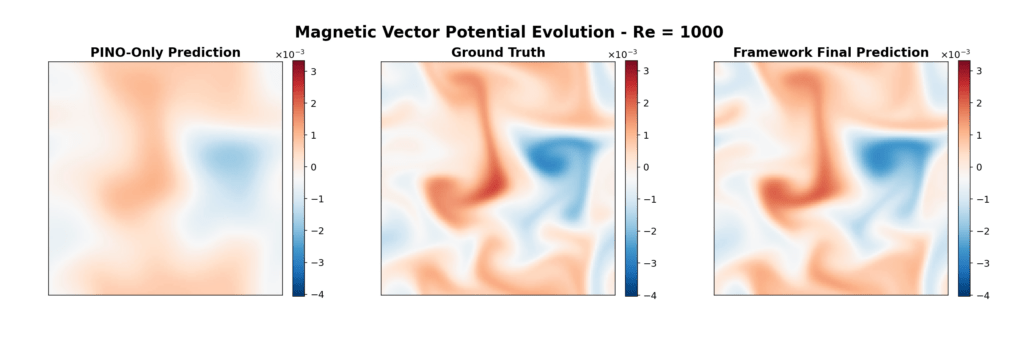

Spreading the workload across the two stages allowed the neural operator to accurately resolve the bulk evolution of the plasma and establish the mean flow, capturing the largest features in the turbulent flow. The diffusion model worked to reproduce the cascade of smaller patterns in the flow by regenerating the smallest and fastest of the fluctuations that define the system’s complexity.

“The neural operators give us the large-scale physics quickly, but turbulence lives in the tiny details,” Kacmaz said. “By training the diffusion model to learn only what the neural operators cannot represent, we were able to reconstruct the missing magnetic and velocity structures across all scales.”
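The division of labor Kacmaz describes can be illustrated with a toy decomposition. In this sketch, a spectral low-pass filter stands in for the neural operator's large-scale prediction, and the leftover fine-scale residual is the kind of target a diffusion model could be trained on. The filter, the cutoff `k_cut`, and the synthetic field are assumptions made for illustration and are not the team's method or data.

```python
import numpy as np

def low_pass(field, k_cut):
    """Keep Fourier modes with |k| <= k_cut on a square periodic grid.

    Hypothetical stand-in for the coarse, large-scale prediction.
    """
    n = field.shape[0]
    k = np.fft.fftfreq(n, d=1.0 / n)  # integer wavenumbers
    kx, ky = np.meshgrid(k, k, indexing="ij")
    mask = np.sqrt(kx**2 + ky**2) <= k_cut
    return np.real(np.fft.ifft2(np.fft.fft2(field) * mask))

n = 64
x = np.linspace(0.0, 2.0 * np.pi, n, endpoint=False)
X, Y = np.meshgrid(x, x, indexing="ij")

large_scale = np.sin(X) * np.cos(Y)                   # slow, big features
small_scale = 0.2 * np.sin(12 * X) * np.sin(9 * Y)    # fast, fine features
field = large_scale + small_scale

coarse = low_pass(field, k_cut=4)   # what the first stage would supply
residual = field - coarse           # what the second stage must regenerate
reconstructed = coarse + residual   # the two stages combined
```

In this toy case the coarse part matches the large-scale component and the residual matches the small-scale one, so the second stage only has to model detail that the first stage leaves out.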

The resulting framework delivers plasma turbulence predictions in seconds and reduces prediction errors by more than half when compared with earlier approaches, even when modeling extreme turbulence.

“This is the first time AI has been able to faithfully model magnetized turbulence at such extreme conditions,” Huerta said. “By coupling the physics-informed neural operators with generative diffusion, we created a framework that respects the equations while recovering the full complexity of the plasma.”

The team hopes to extend the model to even more complex systems, including plasmas resolved in full 3D and astrophysical settings, and to apply it to problems such as modeling plasma turbulence in nuclear fusion reactors.

This research was supported by the DOE Office of Science’s Advanced Scientific Computing Research program and by the National Science Foundation. The OLCF is a DOE Office of Science user facility at ORNL.

Related publication: Semih Kacmaz, Eliu Huerta, and Roland Haas. “Resolving turbulent magnetohydrodynamics: a hybrid operator-diffusion framework.” Machine Learning: Science and Technology 6.3 (2025): 035057. DOI: https://doi.org/10.1088/2632-2153/ae054c

UT-Battelle manages ORNL for DOE’s Office of Science, the single largest supporter of basic research in the physical sciences in the United States. DOE’s Office of Science is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science.