Simulations of freezing water can help engineers design better blades

The amount of global electricity supplied by wind, the world’s fastest growing energy source, is expected to increase from 2.5 to as much as 12 percent by 2020. Under ideal conditions, wind farms can be three times more efficient than coal plants, but going wherever the wind blows is not always so easy.

“Many of the regions in the world that could benefit from wind energy are actually in cold climates,” said Masako Yamada, a General Electric (GE) Global Research computational scientist. “If you have ice on a turbine, it reduces the efficiency of energy generation, and depending on the severity, you may have to shut down the turbine completely.”

Rather than risk investing in wind turbines that might freeze during icy, cold seasons at high latitudes, such as northern Europe and Canada, or at high altitudes, like mountainous areas of the US and China, these regions simply don’t adopt wind power—despite powerful gusts of renewable energy biting at their fingertips.

For a company like GE—the Fortune 500 multinational corporation that runs the gamut of industrial, energy, aviation, and consumer products—wind turbines represent a lot of potential in a market that could attract almost 100 billion dollars of investments by 2017.

Many turbines already located in colder regions rely on small heaters in the blades to melt ice, but these heaters drain 3–5% of the energy the turbine is producing. To recruit more cold regions to wind energy, GE would like to reduce this draw on the turbine’s energy.

GE researchers Yamada, principal investigator; Azar Alizadeh, co-principal investigator; and Brandon Moore, formerly of GE and now at Sandia National Laboratories, want to better understand the underlying physical changes during ice formation on various icephobic surfaces using high-performance computer modeling. Through a Department of Energy (DOE) ASCR Leadership Computing Challenge (ALCC) award, GE is using the hybrid CPU/GPU Cray XK7 Titan supercomputer managed by the Oak Ridge Leadership Computing Facility (OLCF) at Oak Ridge National Laboratory to simulate hundreds of millions of water molecules freezing in slow motion.

With insight into the inner workings of ice, Yamada can help steer GE’s experimental icephobic surfaces research.

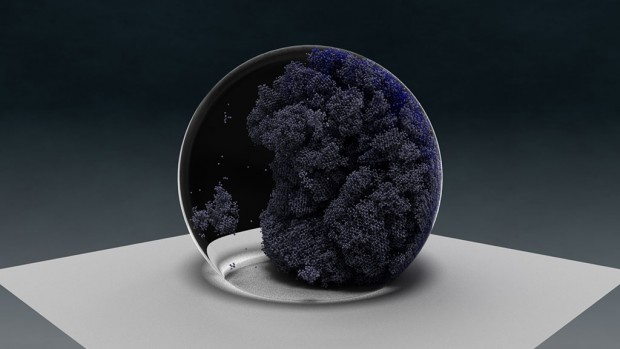

GE simulated hundreds of water droplets, each including one million molecules. Simulations accelerated at least 200 times over pre-GPU estimates, permitting GE to study the nucleation of individual ice molecules. Visualization by M. Matheson (ORNL)

GE’s wind workshop

Since 2005, the GE anti-ice experimental research group has developed and tested many surfaces under freezing conditions. As it turns out, energy-draining technologies that apply heat or mechanical force are not the only way to curtail ice accumulation. Engineered surfaces that require no energy input can slow down the freezing process, lower the freezing temperature of water, reduce the adhesiveness of ice, or repel water before it has the chance to settle and freeze.

“There are polar-opposite opinions among experimentalists on what works best to mitigate the formation of ice,” Yamada said. “So we need to sort through the potential solutions.”

For example, none of these surfaces behaves the same way across all temperatures and humidity levels, or when subjected to different volumes and velocities of precipitation.

“Freezing is a really complicated process,” Yamada said. “What we found experimentally is that surfaces that are great icephobic surfaces at one temperature may not be as effective at a different temperature.”

Although experimental research has yielded many gains for GE (the only US-based wind turbine manufacturer among the top six), researchers realized something is happening on the atomic level that affects how ice forms on various surfaces and at various temperatures.

“If we can know how the freezing process is initiated on the atomic level, then we might be able to avoid it,” Yamada said.

Breaking the ice

Even in the best experimental setup, researchers cannot observe individual water molecules freezing. By the time freezing is detected by way of a visual or thermal signature, the interesting stuff has already happened.

“In an experimental setup, they can only really figure out the general region where ice starts freezing,” Yamada said.

To pinpoint the time and place water molecules nucleate (or bud into ice), GE needed to enhance two things through simulation: the size of the system (individual molecules rather than droplets) and the increments of time at which researchers could observe those water molecules transform into ice. These simulations would constitute an unprecedented study due to the computational challenge involved.

Because molecular dynamics is a computational technique that takes a long time, most freezing simulations are still in the 1,000-molecule range.

“We needed a million molecules per droplet to even be able to start guiding the experimentalists,” Yamada said.

While many high-performance computing systems could have crunched the numbers for this “champion droplet,” including GE’s in-house Cray, Yamada had an additional challenge. She would have to model much more than one drop of ice.

“One droplet is not meaningful because we wanted to come up with a landscape correlating freezing and the surface of the blade,” Yamada said. “So we needed hundreds of droplets.”

The millions of molecules for each droplet would also need to be simulated in femtosecond snapshots (quadrillionths of a second) in order to accurately calculate their continuous, curvy dynamics.

Hundreds of droplets at a million molecules each, simulated over so many time steps, would demand a petaflop supercomputer, a machine that can perform more than 1,000 trillion calculations per second. They would need the best, at the very minimum.
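To give a rough sense of that scale, the short sketch below counts how many single-molecule updates such a campaign implies. Only the million molecules per droplet and the femtosecond time step come from the description above. The droplet count of 300 and the one-nanosecond simulated interval are illustrative assumptions, not figures from GE's study, and the function name is hypothetical.

```python
# Back-of-the-envelope count of molecule updates in a droplet campaign.
# Assumptions (not from the study): 300 droplets, 1 ns of simulated time.

FEMTOSECONDS_PER_NANOSECOND = 10**6


def molecule_updates(molecules_per_droplet, droplets, simulated_ns, timestep_fs=1):
    """Return how many times a single molecule's state must be advanced."""
    steps = simulated_ns * FEMTOSECONDS_PER_NANOSECOND // timestep_fs
    return molecules_per_droplet * droplets * steps


if __name__ == "__main__":
    updates = molecule_updates(
        molecules_per_droplet=10**6,  # from the article
        droplets=300,                 # assumed stand-in for "hundreds"
        simulated_ns=1,               # assumed, for illustration only
    )
    print(f"molecule updates per simulated nanosecond: {updates:.1e}")
```

Each update involves many arithmetic operations, and freezing plays out over far more than a single nanosecond, so the real workload is larger still than this count suggests.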

Titan weathers the storm

GE looked to DOE for help. Through the ALCC award, they received 40 million hours, first on the OLCF’s Cray XT5 Jaguar, then Titan—Jaguar’s significantly more powerful successor that went online in fall 2012.

Titan is the nation’s most powerful supercomputer, with a peak performance of 27 petaflops. It is also one of the few petaflop systems utilizing both traditional CPUs and graphics processing units (GPUs). The GPUs rip through repetitive calculations. For modeling a system of similar calculations (droplets of water molecules requiring 450 million calculations each), GPUs can provide that extra push over the computational cliff.

When GE started working on Jaguar, Yamada set out to simulate ice formation on three different surfaces at three different temperatures, which was already highly ambitious for a molecular dynamics study of this magnitude. When Titan arrived, Yamada’s plans changed.

GE’s simulations increased to six different surfaces and five different temperatures, totaling just under 350 simulations—eight times the original estimate.

“We’re running huge simulations. We’re running many simulations. We’re running very long simulations,” Yamada said. “Only a resource like Titan can handle the type of computation I have to do.”

Yamada’s team partnered with OLCF’s computational scientist Mike Brown to make the most of Titan’s GPUs by repurposing the LAMMPS molecular dynamics application, which was originally written just for CPUs. As part of OLCF’s Center for Accelerated Application Readiness, Brown worked with other OLCF staff, as well as Titan’s Cray and NVIDIA developers, to make LAMMPS (and other popular applications) highly efficient on Titan.

For GE’s study, Yamada and Brown took LAMMPS one step further and incorporated a new model for simulating water molecules, known as the mW water potential.

“Masako’s using a unique potential for water that calculates in triplets,” Brown said. “There’s a lot of concurrency in this model, so we can take advantage of Titan’s GPUs.”
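What "calculates in triplets" can look like is sketched below for a single three-body energy term. This is a minimal illustration, assuming the Stillinger-Weber functional form and the parameter values commonly quoted for the mW model in the literature. Neither the form nor the values are taken from this article, the function names are hypothetical, and the code is not the LAMMPS GPU implementation.

```python
import math
from itertools import combinations

# Parameter values commonly quoted for the mW water model (assumed here,
# not taken from the article). Units: kcal/mol and angstroms.
EPSILON = 6.189
SIGMA = 2.3925
LAMBDA = 23.15
GAMMA = 1.2
CUTOFF = 1.8 * SIGMA        # interactions vanish beyond this distance
COS_THETA0 = -1.0 / 3.0     # cosine of the tetrahedral angle


def _separation(a, b):
    """Return the vector from a to b and its length."""
    delta = [q - p for p, q in zip(a, b)]
    return delta, math.sqrt(sum(c * c for c in delta))


def three_body_term(center, first, second):
    """Energy penalty for one triplet, with the angle measured at `center`."""
    d1, r1 = _separation(center, first)
    d2, r2 = _separation(center, second)
    if r1 >= CUTOFF or r2 >= CUTOFF:
        return 0.0
    cos_theta = sum(p * q for p, q in zip(d1, d2)) / (r1 * r2)
    radial = math.exp(GAMMA * SIGMA / (r1 - CUTOFF) + GAMMA * SIGMA / (r2 - CUTOFF))
    return LAMBDA * EPSILON * (cos_theta - COS_THETA0) ** 2 * radial


def three_body_energy(positions):
    """Sum the triplet terms over every central molecule and neighbor pair."""
    total = 0.0
    for i, center in enumerate(positions):
        others = positions[:i] + positions[i + 1:]
        for first, second in combinations(others, 2):
            total += three_body_term(center, first, second)
    return total
```

Each triplet's contribution depends only on three positions, so the terms can be evaluated independently of one another. That independence is the kind of concurrency Brown describes, although a production code would use neighbor lists rather than this brute-force loop.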

With the ability to crunch the numbers so fast, Yamada and Brown cast a wide net, simulating a landscape of water droplets across each of the six surfaces—poised to catch that first crystal of ice.

“When we saw droplets starting to freeze, we focused more around that region and temperature range,” Yamada said.

Using visualizations developed by OLCF staff, Yamada could study the nucleation (or initial budding) of ice molecules among the million water molecules.

“The visualizations helped us realize where the nucleus starts,” Yamada said. “Are there multiple independent nuclei that fuse together or are there finger-like regions that spread out, for example.”

Choosing a new water model that is well suited for GPUs, skillfully recasting the model, and then running it on Titan’s powerful hybrid architecture accelerated Yamada’s freezing simulations at least 200 times. The numbers so exceeded the original scope of the project that Brown and Yamada co-authored a high-performance computing article published by Computer Physics Communications.

Cutting to the chase

What Yamada is able to take back to her experimental colleagues is a suite of conclusions about which surfaces are favorable under certain temperatures.

“We’ve come to understand there’s no one-size-fits-all solution,” Yamada said. “I think I can go back and say that for temperatures between minus 10 and minus 20, this is the type of solution you want. For minus 30 to minus 40, try this type of solution. So now we can figure out the knobs that you have to turn to make things go this way or that way.”

GE’s work on Titan also led to a new ALCC award for the anti-ice computational team. For future simulations, Yamada plans to include another variable—thermal conductivity—and to modify a computational method known as the Parallel Replica Method to further accelerate simulations.

Yamada said that these detailed simulations, which have accumulated a lot of virtual ice on Titan, will help experimental researchers reduce the number of time-consuming and costly physical experiments needed to open cold climates to renewable power.

GE’s icephobic materials research could also guide other anti-ice applications. For example, ice accumulation can clog oil and gas pipelines, which are essential to energy industries in Alaska and Canada.

“Our work on Titan is accelerating and expanding our understanding about ice formation,” Yamada said. “The more we know about ice formation, the better we can design an array of GE products to operate more efficiently in cold conditions.” —Katie Elyce Jones

Related Publications

Brown, W.M., Yamada, M. “Implementing Molecular Dynamics on Hybrid High Performance Computers – Three-Body Potentials,” Computer Physics Communications. 2013. In press. doi: 10.1016/j.bbr.2011.03.031