The world’s most powerful supercomputer has raised the bar for molecular dynamics, running a simulation 1,000 times greater in size and speed than any previous simulation of its kind.

The simulation was conducted by a team of researchers at the University of Melbourne, the Department of Energy’s Oak Ridge National Laboratory, AMD, and QDX who used the Frontier supercomputer to calculate the dynamics of more than 2 million electrons.

Moreover, it is the first time-resolved quantum chemistry simulation to exceed an exaflop (more than a quintillion, or a billion billion, calculations per second) using double-precision arithmetic. The roughly 16 significant decimal digits that double precision provides are computationally demanding, but many scientific problems require that additional precision. In addition to setting a new benchmark, the achievement provides a blueprint for enhancing algorithms to tackle larger, more complex scientific problems using leadership-class exascale supercomputers.
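To give a feel for why precision matters, here is a minimal Python sketch comparing a long running sum in double precision with the same sum rounded to single precision after every addition. It is only an illustration, not anything taken from the team's code; the helper names `to_float32` and `accumulate` are made up for this example.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (double precision) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(step: float, count: int, single: bool) -> float:
    """Add `step` to a running total `count` times.

    When `single` is True, the step and the running total are rounded to
    single precision after every addition, imitating float32 arithmetic.
    """
    if single:
        step = to_float32(step)
    total = 0.0
    for _ in range(count):
        total += step
        if single:
            total = to_float32(total)
    return total


# Adding 0.1 one hundred thousand times should give 10,000.
expected = 10_000.0
double_error = abs(accumulate(0.1, 100_000, single=False) - expected)
single_error = abs(accumulate(0.1, 100_000, single=True) - expected)

print(f"double-precision error: {double_error:.3e}")
print(f"single-precision error: {single_error:.3e}")
```

Rounding errors that are invisible in a single operation build up over many operations, and a large simulation performs vastly more operations than this toy loop.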

“This is a game changer for many areas of science, but especially for drug discovery using high-accuracy quantum mechanics,” said Giuseppe Barca, an associate professor and programmer at the University of Melbourne. “Historically, researchers have hit a wall trying to simulate the physics of molecular systems with highly accurate models because there just wasn’t enough computing power. So, they have been confined to only simulating small molecules, but many of the interesting problems that we want to solve involve large models.”

For drug design, for example, Barca explained that large proteins are often responsible for causing disease. Those proteins can be as large as 10,000 atoms — too many to simulate without using highly approximate models running at a reduced precision. Reduced-precision models are more computationally efficient but produce less accurate results.

Modern drug design requires the ability to simultaneously model large proteins and match them with libraries of small molecules intended to bind to the large protein and block it from functioning. Most molecular simulations use simplified models to represent the forces between atoms. However, these force field approximations don’t account for certain essential quantum mechanical phenomena such as bond breaking and formation — both important to chemical reactions — and other complicated interactions.

To overcome these limitations, Barca and his team developed EXESS, or the Extreme-scale Electronic Structure System. Instead of going through the painstaking process of scaling up legacy codes that were written for previous-generation petascale machines, Barca decided to write a new code specifically designed for exascale systems like Frontier with hybrid architectures that combine central processing units, or CPUs, with graphics processing unit, or GPU, accelerators.

Common methods in quantum mechanics, such as density functional theory, estimate a molecule’s energy based on the density distribution of its electrons. In contrast, EXESS employs wave function theory, or WFT, a quantum mechanical approach that directly uses the Schrödinger equation to calculate the behavior of electrons based on their wave functions. EXESS incorporates MP2, a specific type of WFT that adds an extra layer of accuracy and includes interactions between electron pairs.
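For readers who want to see what "interactions between electron pairs" means in practice, the standard textbook expression for the second-order Møller-Plesset (MP2) energy is shown below in spin-orbital form. This is the general formula, not a description of how EXESS evaluates it. Here $i, j$ label occupied orbitals, $a, b$ label unoccupied (virtual) orbitals, $\varepsilon$ are the Hartree-Fock orbital energies, and $\langle ij \| ab \rangle$ are antisymmetrized two-electron integrals.

$$
E_{\mathrm{MP2}} = E_{\mathrm{HF}} + E^{(2)}, \qquad
E^{(2)} = \frac{1}{4} \sum_{i,j}^{\mathrm{occ}} \sum_{a,b}^{\mathrm{virt}}
\frac{\left| \langle ij \| ab \rangle \right|^{2}}{\varepsilon_i + \varepsilon_j - \varepsilon_a - \varepsilon_b}
$$

Each term couples one pair of occupied orbitals to one pair of virtual orbitals, which is why the correction is described as accounting for electron pairs.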

The Schrödinger equation provides a significantly higher level of accuracy in simulations. However, solving the equation requires a tremendous amount of computing power and time, so much so that these simulations had been limited to small systems. That’s in part because, as the number of atoms in the simulation increases, the time it takes to simulate them grows faster than the atom count itself.
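The growth in cost can be made concrete with a small sketch. The article does not say how EXESS scales. The default exponent of 5 below is an assumption based on the formal fifth-power scaling of conventional MP2 with system size, and `relative_cost` is a hypothetical helper written for this illustration.

```python
def relative_cost(size_factor: float, exponent: float = 5.0) -> float:
    """Work multiplier when the system grows by `size_factor`.

    Assumes cost is proportional to N**exponent. The default of 5 is the
    formal scaling of conventional MP2, used here as an assumption.
    """
    return size_factor ** exponent


for factor in (2, 10, 100):
    print(f"{factor}x more atoms -> about {relative_cost(factor):,.0f}x the work")
```

Under that assumption, even a doubling of the system multiplies the work many times over, which is why brute-force hardware gains alone do not reach large proteins and new algorithms are needed.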

“Being able to accurately predict the behavior and model the properties of atoms either in larger molecular systems or with more fidelity is fundamentally important for developing new, more advanced technologies, including improved drug therapeutics, medical materials and biofuels,” said Dmytro Bykov, group leader in computing for chemistry and materials at ORNL. “This is why we built Frontier, so we can push the limits of computing and do what hasn’t been done.”

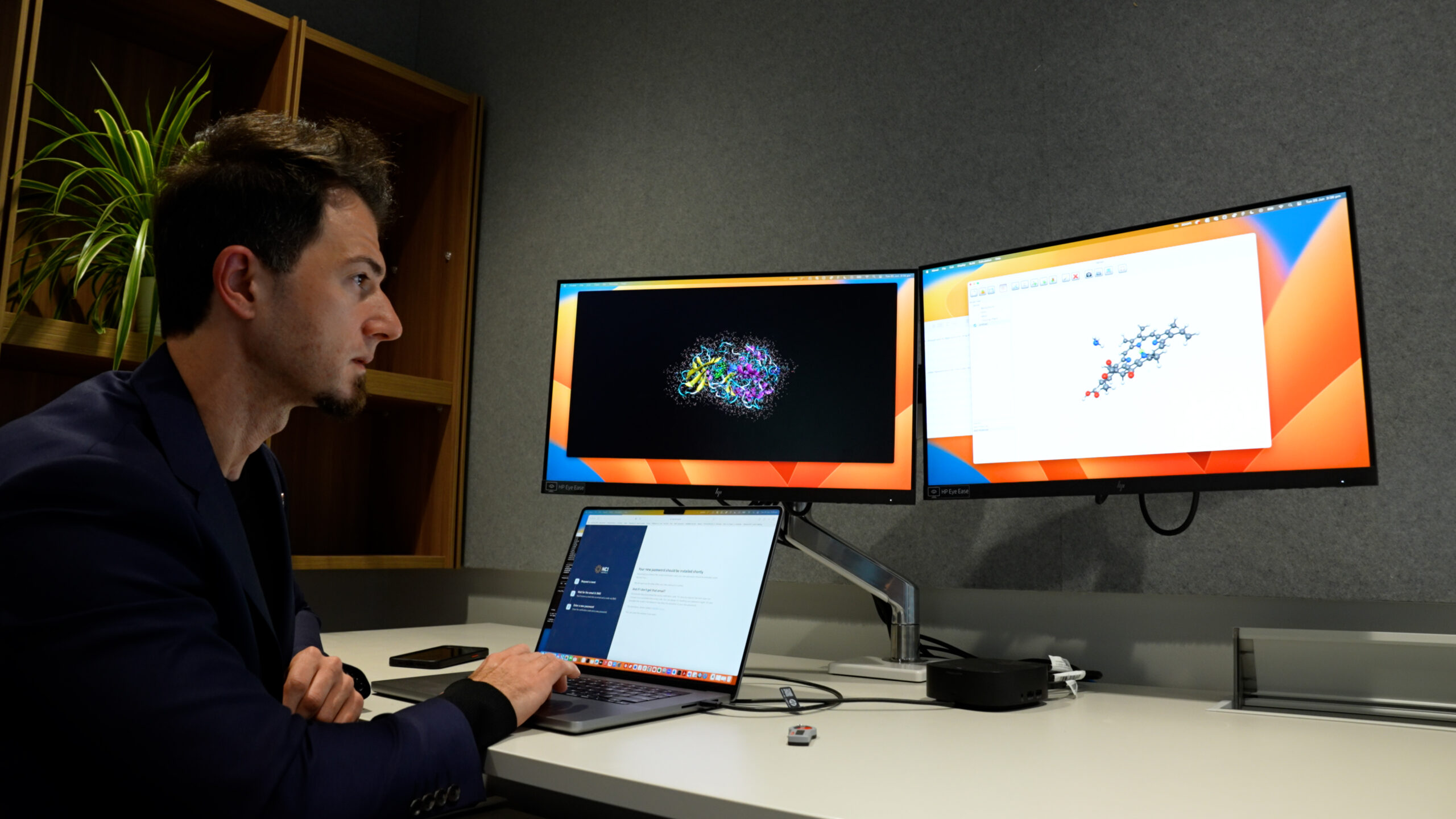

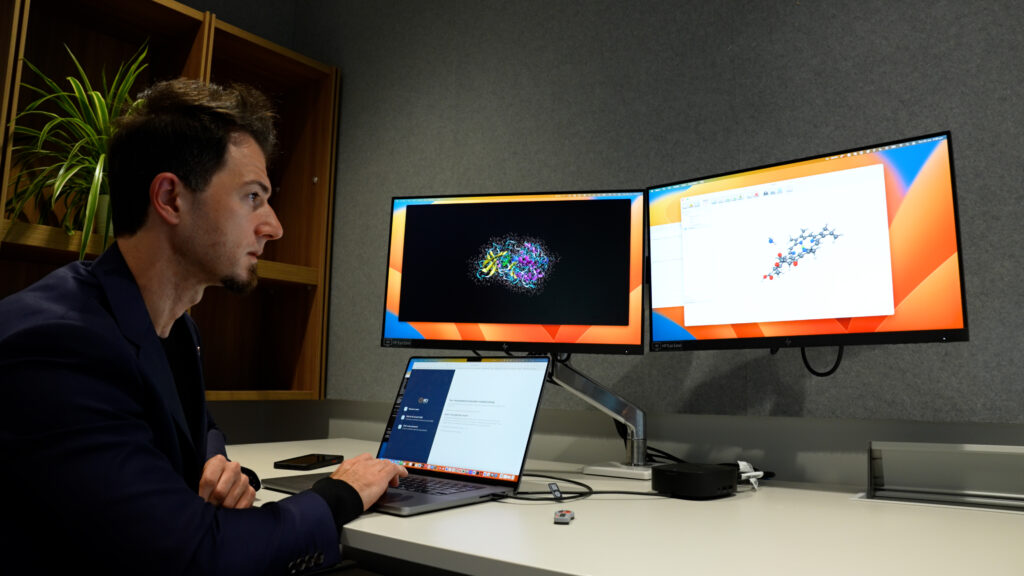

Giuseppe Barca, an associate professor at the University of Melbourne, led a team of researchers in breaking new ground in the exascale computing era. Using Frontier, the world’s most powerful supercomputer, the team conducted a molecular dynamics simulation 1,000 times greater in size and speed than any previous state-of-the-art simulation of its kind. Credit: University of Melbourne

Pushing way past the petaflop

Bykov and Barca started working together several years ago as part of the Exascale Computing Project, or ECP, DOE’s research, development and deployment effort focused on delivering the world’s first capable exascale ecosystem. The duo’s collaboration within the ECP was focused on optimizing decades-old codes to run on next-generation supercomputers built with completely new hardware and architectures. Their goal with EXESS was not only to write a new molecular dynamics code for exascale machines but also to create a simulation that would put Frontier to the test.

The HPE-Cray EX Frontier supercomputer, located at the Oak Ridge Leadership Computing Facility, or OLCF, is currently ranked No. 1 on the TOP500 list of the world’s fastest supercomputers after achieving a maximum performance of 1.2 exaflops. Frontier has 9,408 nodes with more than 8 million processing cores from a combination of AMD 3rd Gen EPYC™ CPUs and AMD Instinct™ MI250X GPUs.

The team’s efforts on Frontier were a huge success. They ran a series of simulations that utilized 9,400 Frontier computing nodes to calculate the electronic structure of different proteins and organic molecules containing hundreds of thousands of atoms.

Frontier’s massive computing power allowed the research team to shatter the ceiling of previous molecular dynamics simulations at quantum-mechanical accuracy. It was the first time a quantum chemistry simulation of more than 2 million electrons had exceeded an exaflop when using double-precision arithmetic.

This isn’t the first time the team has raised the bar for these kinds of simulations. Prior to their work with Frontier, they had similar success on the 200-petaflop Summit supercomputer, Frontier’s predecessor, also located at the OLCF. In addition to being 1,000 times larger and faster, the exascale simulations also predict how chemical reactions happen over time, something the team previously lacked the computing power to do.

The average run times of the simulations ranged from minutes to several hours. The new algorithm enabled the team to simulate atomic interactions in time steps (essentially snapshots of the system) with significantly lower latency than previous methods. For example, time steps for protein systems with thousands of electrons can now be completed in as little as 1 to 5 seconds.

Time steps are crucial for understanding how certain processes naturally evolve over time. This resolution will help researchers better understand how drug molecules can bind to disease-causing proteins, how catalytic reactions can be used to recycle plastics, how to better produce biofuels and how to design biomedical materials.
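The idea of advancing a system in time steps can be sketched in a few lines. The example below uses velocity Verlet, a common molecular dynamics integrator, chosen here purely for illustration; the article does not say which integrator EXESS uses. A simple spring force (`spring_force`, a hypothetical stand-in) takes the place of the quantum-mechanical forces that a real simulation would recompute at every step.

```python
from typing import Callable, List, Tuple


def velocity_verlet(
    x: float,
    v: float,
    force: Callable[[float], float],
    mass: float,
    dt: float,
    steps: int,
) -> List[Tuple[float, float]]:
    """Return the (position, velocity) snapshot recorded after each time step."""
    trajectory = []
    a = force(x) / mass
    for _ in range(steps):
        x = x + v * dt + 0.5 * a * dt * dt  # advance the position
        a_new = force(x) / mass             # recompute the force at the new position
        v = v + 0.5 * (a + a_new) * dt      # advance the velocity with the averaged acceleration
        a = a_new
        trajectory.append((x, v))
    return trajectory


def spring_force(x: float) -> float:
    """Toy harmonic force with spring constant 1 (stand-in for real forces)."""
    return -1.0 * x


def total_energy(x: float, v: float) -> float:
    """Kinetic plus potential energy for unit mass and unit spring constant."""
    return 0.5 * v * v + 0.5 * x * x


snapshots = velocity_verlet(x=1.0, v=0.0, force=spring_force, mass=1.0, dt=0.01, steps=1000)
final_x, final_v = snapshots[-1]
print(f"{len(snapshots)} snapshots, final energy {total_energy(final_x, final_v):.6f}")
```

The force evaluation inside the loop is the expensive part in a quantum-mechanical simulation, so the time needed per step determines how long a trajectory can realistically be followed.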

“I cannot describe how difficult it was to achieve this scale both from a molecular and a computational perspective,” Barca said. “But it would have been meaningless to do these calculations using anything less than double precision. So it was either going to be all or nothing.”

“Two of the biggest challenges in this achievement were designing an algorithm that could push Frontier to its limits and ensuring the algorithm would run on a system that has more than 37,000 GPUs,” Bykov added. “The solution meant using more computing components, and any time you add more, it also means there’s a greater chance that one of those parts is going to break at some point. The fact that we used the entire system is incredible, and it was remarkably efficient.”
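The arithmetic behind Bykov's point can be sketched as follows. Two inputs are not from the article: the figure of four MI250X GPUs per node comes from Frontier's publicly documented node configuration, and the per-GPU failure probability is a purely hypothetical number chosen to show the trend. The model also assumes failures are independent.

```python
NODES = 9_408          # node count given in the article
GPUS_PER_NODE = 4      # assumption from Frontier's public specifications, not from the article

total_gpus = NODES * GPUS_PER_NODE


def prob_any_failure(components: int, p_single: float) -> float:
    """Probability that at least one of `components` independent parts fails,
    when each fails with probability `p_single` during the run."""
    return 1.0 - (1.0 - p_single) ** components


HYPOTHETICAL_P = 1e-5  # made-up per-GPU failure probability for one run

print(f"total GPUs: {total_gpus:,}")
for count in (100, 1_000, total_gpus):
    print(f"{count:>6,} GPUs -> {prob_any_failure(count, HYPOTHETICAL_P):.1%} chance of a failure")
```

Even when each individual part is very reliable, the chance that something fails somewhere rises steadily with the number of parts, which is why running on the full machine is notable.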

On a personal note, Barca added, after he and his team had worked around the clock for weeks in preparation, the calculation that broke the double-precision exaflop barrier for scientific applications came on the last day of their Frontier allocation with the very last calculation of the simulation. It was recorded at 3 a.m. — not long after Barca had fallen asleep for the first time in a long time.

The team is currently working to prepare their results for scientific publication. After that, they plan to use the high-accuracy simulations to train machine learning models and integrate artificial intelligence into the algorithm. The improvements will provide an entirely new level of sophistication and efficiency for solving even larger and more complex problems.

Support for this research came from the DOE Office of Science’s Advanced Scientific Computing Research program. The OLCF is a DOE Office of Science user facility.

UT-Battelle manages ORNL for DOE’s Office of Science, the single largest supporter of basic research in the physical sciences in the United States. DOE’s Office of Science is working to address some of the most pressing challenges of our time. For more information, visit energy.gov/science.