The “Pioneering Frontier” series features stories profiling the many talented Oak Ridge National Laboratory employees behind the construction and operation of the Oak Ridge Leadership Computing Facility’s incoming exascale supercomputer, Frontier. The HPE Cray system is scheduled for delivery in 2021, with full user operations in 2022.

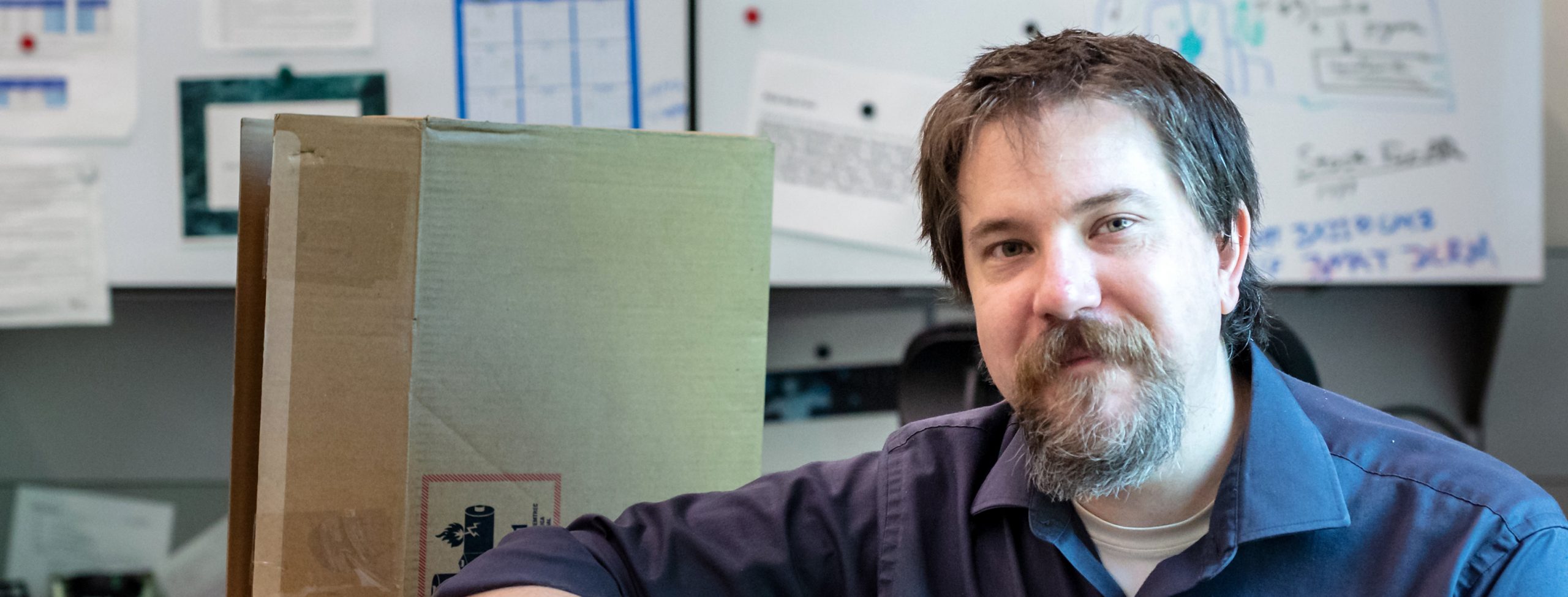

In his spartan office at the US Department of Energy’s (DOE’s) Oak Ridge National Laboratory (ORNL), sitting at his desktop terminal, Matt Belhorn has the future of high-performance computing (HPC) at his fingertips. Without his distinct expertise, the full potential of the nation’s first exascale supercomputer, Frontier, may remain untapped by busy computational scientists around the world. That’s because Belhorn is the person who installs the software.

As an HPC engineer at the DOE’s National Center for Computational Sciences (NCCS), Belhorn is responsible for installing and maintaining many of the integral programs that make the work of scientific inquiry on supercomputers possible. Frameworks, tools, libraries, compilers, debuggers—any piece of software needed by users that isn’t otherwise provided by the computer vendor is Belhorn’s domain.

“Researchers don’t want to spend their time installing software—they just want to do their research. So, we make sure they have the tools and software they need,” said Belhorn, who works in the NCCS’s System Acceptance and User Environment group. “We optimize them, tuning them for our machines so that they’re better than what somebody who’s not a system administrator might whip together themselves.”

Installing software on supercomputers—especially new systems featuring the latest hardware, such as ORNL’s Summit in 2018 or Frontier later this year—is more complicated than just making sure the floppy disks are inserted in the right order, as in the computing days of yore. It requires adaptability to different architectures.

“DOE doesn’t put all their eggs in the same basket, so we have different supercomputer architectures. The difficulty with that is when you are getting your hands on a new architecture, all the codes that people have written and used in scientific computing aren’t necessarily ported to that architecture,” Belhorn said. “I don’t develop these applications, but I can dig through them and understand them. And a lot of times, we’ll find bugs in a package and just patch it.”

The upcoming Frontier machine, an HPE Cray EX system boasting new AMD EPYC™ CPUs and AMD Instinct™ GPUs, will be able to perform calculations up to 10 times faster than today’s top supercomputers. Frontier, currently being installed at the Oak Ridge Leadership Computing Facility (OLCF), a DOE Office of Science user facility at ORNL, will exceed 1.5 exaflops of performance; one exaflop equals a billion-billion (10¹⁸) floating point operations per second. To help familiarize users with its new architecture, HPE has assembled an early access system at ORNL called Spock, which serves as a “mini-Frontier” with similar components. Belhorn has been loading Spock with software to search for potential bugs before the software is installed on Frontier.

“When we get an early access machine, scientists want to see the standard libraries and packages and utilities that they have been using as part of their workflow for years—but those packages might not be compilable. There’s going to be some pain points,” Belhorn said. “We collect the issues, point out the problems, and give that feedback back to the vendors. The software developers can iron out these things so that from day one, users of Frontier will have a complete working software environment.”

Belhorn is the NCCS’s in-house master of Spack, a package manager for supercomputers that eases the installation of scientific software. Spack lets users build software stacks from package recipes written in Python, an open-source programming language that Belhorn became proficient in as a graduate student in high-energy particle physics at the University of Cincinnati. Working on a project that entailed moving 70-petabyte datasets to and from a supercomputer in Japan and then analyzing them, Belhorn found himself writing quite a few Python applications. When the experiment was put on hold by Japan’s 2011 Tōhoku earthquake and tsunami, he found that he could put his programming experience to work at ORNL.

Matt Belhorn is responsible for installing and maintaining many of the integral programs that make the work of scientific inquiry on supercomputers possible. Photo by Carlos Jones/ORNL.

“I’ve got a long history and a lot of experience developing applications for Python. I naturally gravitated to it. I started getting more involved in software installations using Spack because of that background, and then eventually it became what I do all day,” Belhorn said.

After a full day of software wrangling at work, you might expect Belhorn to put computing aside once he arrives home. But, in fact, one of his biggest hobbies has been automating his house with computer monitoring systems of his own design. It was inspired by a homeowner’s worst nightmare: a week after his family moved into their new house, the basement was flooded by a downpour, taking out half of their living space for a year’s worth of renovations.

Belhorn vowed that such a disaster wouldn’t happen again. Beyond installing redundant sump pumps—one on main power and the other on battery backup—and water contact flood sensors, he engineered an early warning system to track potential problems and report them to a PC dashboard. In the basement, he installed a device that monitors the status of the sump pumps as well as radon exhaust fans.

“It’s a differential pressure sensor that can tell me if the radon fan is operating or whether conditions are causing it to flow the wrong way,” Belhorn said. “The sensors measure ambient pressure in the garage and pressure underneath the house slab. For example, 4.5 pascals of negative pressure is fine. That’s a great vacuum. But if that went up to minus 0.3, minus 0.2, then I’d be concerned it’s not extracting radon gas from the entire slab.”
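The check Belhorn describes boils down to comparing one pressure difference against a cutoff. The sketch below is hypothetical and is not his code: it assumes the sensor reports pressure under the slab minus ambient garage pressure in pascals (negative means the fan is pulling a vacuum), and the −0.5 Pa cutoff and the function name `classify_radon_fan` are illustrative choices placed between the “fine” and “concerning” values he quotes.

```python
# Hypothetical sketch, not Belhorn's actual monitoring code.
# Assumes readings are (pressure under slab) - (ambient garage pressure), in pascals.
# Negative values mean the radon fan is pulling a vacuum under the slab.

CONCERN_THRESHOLD_PA = -0.5  # assumed cutoff: -4.5 Pa is "fine", -0.3 Pa is "concerning"


def classify_radon_fan(differential_pa: float) -> str:
    """Classify radon fan status from a single differential pressure reading."""
    if differential_pa > 0:
        # Higher pressure under the slab than in the garage: air is flowing the wrong way.
        return "reversed"
    if differential_pa > CONCERN_THRESHOLD_PA:
        # Vacuum too weak to extract radon gas from the entire slab.
        return "weak"
    return "ok"


if __name__ == "__main__":
    for reading in (-4.5, -0.3, -0.2, 0.4):
        print(reading, classify_radon_fan(reading))
```

With these assumptions, −4.5 Pa classifies as `ok`, −0.3 and −0.2 Pa as `weak`, and any positive reading as `reversed`.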

Outside of the house, he built his own automated weather station to warn him when conditions might lead to potential flooding.

“If it rains greater than 15 millimeters an hour for more than a couple hours, I’ve got the sensors to send me an e-mail or turn on a light and an alarm in my office at home, so I can keep an eye on it, basically,” Belhorn said, checking its status on his laptop. “Just yesterday around 4 p.m., there was 58 millimeters per hour rainfall for a very short amount of time, so not enough for even the sump pump to kick off.”
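The alarm rule Belhorn quotes can be expressed as “rain rate stays above a threshold for longer than a minimum duration.” The following is a minimal, hypothetical sketch rather than his implementation: it assumes rain-rate samples in millimeters per hour arrive at a fixed interval in whole minutes, reads “a couple hours” as 120 minutes, and only detects the condition (sending the e-mail or switching on the light and alarm is left out). The function name `sustained_heavy_rain` is illustrative.

```python
# Hypothetical sketch of a sustained-rainfall alarm; not Belhorn's actual code.
# Assumes rain-rate samples (mm/h) taken at a fixed interval given in whole minutes.

RATE_THRESHOLD_MM_PER_H = 15.0  # threshold quoted in the article
MIN_DURATION_MIN = 120          # "a couple hours", assumed here to mean 2 hours


def sustained_heavy_rain(rates_mm_per_h, sample_interval_min):
    """Return True if the rate stays above the threshold for longer than MIN_DURATION_MIN."""
    run_min = 0  # length of the current unbroken run of heavy-rain samples, in minutes
    for rate in rates_mm_per_h:
        if rate > RATE_THRESHOLD_MM_PER_H:
            run_min += sample_interval_min
            if run_min > MIN_DURATION_MIN:
                return True
        else:
            run_min = 0  # the run is broken, so start counting again
    return False


if __name__ == "__main__":
    brief_burst = [0, 58, 58, 0, 0]  # a short downpour like the one Belhorn mentions
    steady_storm = [20] * 13         # 130 minutes above 15 mm/h
    print(sustained_heavy_rain(brief_burst, 10))   # False
    print(sustained_heavy_rain(steady_storm, 10))  # True
```

Counting whole minutes rather than summing fractional hours keeps the duration comparison exact, so a storm lasting exactly two hours does not trip the alarm by rounding error.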

Belhorn’s system also monitors a variety of other parameters, such as the temperature and humidity throughout his house, and then stores the data for analysis. There’s nothing quite like it on the market that delivers this much information to homeowners, but he’s not interested in commercializing his creation.

“I looked to see if somebody made a device like this, but I ended up making it myself—it’s like $20 worth of materials. I’d happily publish the code for it and give it away. I’m a big fan of open-source software,” Belhorn said. “That’s why I like working here, a government-sponsored, open-science organization. I enjoy scientific discovery and learning, and it’s only useful if people can access it.”

UT-Battelle LLC manages Oak Ridge National Laboratory for DOE’s Office of Science, the single largest supporter of basic research in the physical sciences in the United States. DOE’s Office of Science is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science.