The “Pioneering Frontier” series features stories profiling the many talented ORNL employees behind the construction and operation of the OLCF’s incoming exascale supercomputer, Frontier. The HPE Cray system is scheduled for delivery in 2021, with full user operations in 2022.

The world’s fastest supercomputer comes with some assembly required.

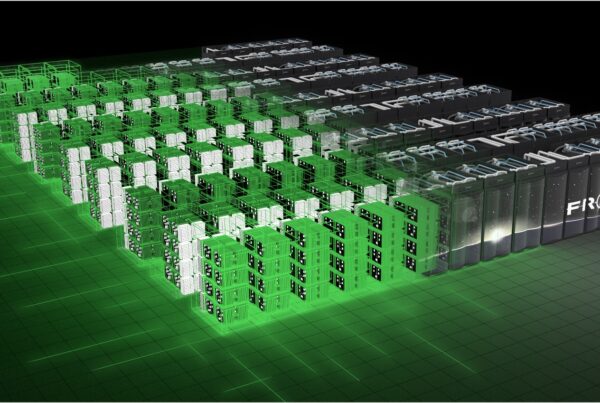

Frontier, the nation’s first exascale computing system, won’t come together as a whole until all pieces arrive at the US Department of Energy’s (DOE’s) Oak Ridge National Laboratory to be installed—with the eyes of the world watching—on the data center floor inside the Oak Ridge Leadership Computing Facility (OLCF).

Once those components operate in harmony as advertised, David Bernholdt and his team can take time for a quick bow—and then get back to work.

Bernholdt and his staff lead the effort to ensure the myriad compilers, performance tools, debuggers, and other elements of the Frontier puzzle fit together to deliver the world-leading results intended. The feeling can be like that of a pit crew fine-tuning a race car—except this car is the first of its kind, and it’s headed into the final lap as they work on it.

The HPE Cray system promises computational speeds that top 1 quintillion calculations per second (more than 10¹⁸, or a billion billion, operations per second) and could help solve challenges across the scientific spectrum when Frontier opens to full user operations in 2022.

“When DOE started thinking seriously about exascale, back around 2009, we wondered if it was even going to be possible to do this,” Bernholdt said. “I always figured we would find a way. Now the technology has evolved to the point that it’s not nearly as scary to build and program and debug as we thought it would be, and we’re coming down to the finish line. It’s been quite a ride.”

For Bernholdt’s team, getting Frontier to the finish line includes working with users (the scientists and engineers eager to run their codes and launch high-speed simulations of everything from weather patterns and stages of cancer to nuclear reactions and collapsing stars) and with the corporate vendors working around the clock to meet specifications and deliver a first-of-its-kind product. Because the OLCF encourages standards-based programming environments when feasible, the team also works closely with a variety of standards organizations to ensure those standards reflect users’ needs and support the vendors’ hardware capabilities as fully as possible.

“It’s sometimes daunting to remember: This is Serial No. 1,” Bernholdt said. “They’ve created this machine just for us, and even the best hardware and software are not going to be perfect, at least not right out of the box. A large part of what we do is try to understand what users will need from the system to use it effectively and help them represent that in their codes.

“At the same time, we’re working with our vendors and compilers to make sure their solutions implement the standards we need and provide the necessary performance on the system. We have to make sure the vendors are providing enough detailed, granular information about the system in a timely enough fashion that the software developers can take advantage of it, and we have to make sure the languages have evolved enough to carry out the tasks. There are a lot of moving parts, and they’re only moving faster as we go.”

Bernholdt’s scientific background prepared him for the mission. He spent his early research career in computational chemistry and helped develop NWChem, a scalable software package for the field that is still used worldwide. Plans call for a revamped version of the package to run on Frontier and on other exascale supercomputers, such as Argonne National Laboratory’s Aurora.

Bernholdt later moved into computer science and software development to design and refine tools that tackle some of the same problems he encountered as a scientific user. He and his team helped program and debug Frontier’s supercomputing predecessors: Titan (27 petaflops, or 27 quadrillion calculations per second) and Summit (200 petaflops, or 200 quadrillion calculations per second).

“This is our third accelerator-based machine, so we have a reasonably good idea of how to program these,” Bernholdt said. “The biggest single challenge has been the schedule. We’ve had maybe half the time to get Frontier ready that we had for Summit, and it’s a newer software stack that’s kept everybody scrambling. But that means more opportunities and incentives for optimization.”

That round-the-clock work won’t end when Frontier switches on. Bernholdt and his team will continue monitoring the supercomputer and looking for ways to raise standards and boost performance.

“It never stops,” Bernholdt said. “It’s always really satisfying to see people able to use the system to good effect, but that’s not the ending. Frontier will continue to evolve and improve, and we’ll be part of that. I feel pretty confident saying there is no other place on earth right now that could support a similar project of this scale and importance.”

UT-Battelle LLC manages Oak Ridge National Laboratory for DOE’s Office of Science, the single largest supporter of basic research in the physical sciences in the United States. DOE’s Office of Science is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science.