Computational users running scientific codes on the Oak Ridge Leadership Computing Facility’s (OLCF’s) 200-petaflop IBM AC922 Summit supercomputer are generating more data than ever. To handle this data explosion, each Summit compute node is equipped with a solid-state storage device (SSD); in aggregate, these devices write data nearly four times faster than the parallel file system (PFS). To allow users to take seamless advantage of this local storage, the OLCF’s Technology Integration (TechInt) Group is providing two new software libraries, Spectral and SymphonyFS.

When supercomputers run huge jobs in fields such as chemistry, biology, physics, and climate science, they must periodically “save” the data that’s already been generated. This checkpointing process works similarly to how documents are periodically saved in word processing programs—but on a much more massive scale.

Summit’s IBM Spectrum Scale PFS boasts an impressive peak write speed of 2.5 terabytes per second (TB/s), which is the equivalent of 100 Blu-ray™ movies per second. The compute node–local SSDs provide up to 9.7 TB/s of aggregate write performance, nearly four times that of the PFS. For machine learning applications, the SSDs deliver an even greater gain: more than 26.7 TB/s of read performance, roughly ten times what the PFS provides.
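As a quick check on these figures, the ratios can be worked out directly. The short Python sketch below uses only the numbers quoted above. It assumes decimal units (1 TB = 1,000 GB) and assumes the read comparison uses the same 2.5 TB/s PFS figure, which the article does not state explicitly. The variable and function names are illustrative.

```python
# Figures quoted in the article (terabytes per second).
PFS_WRITE_TBPS = 2.5    # Spectrum Scale peak write speed
SSD_WRITE_TBPS = 9.7    # aggregate node-local SSD write speed
SSD_READ_TBPS = 26.7    # aggregate node-local SSD read speed


def ratio(fast: float, baseline: float) -> float:
    """Return how many times faster `fast` is than `baseline`."""
    return fast / baseline


# Assumption: the PFS read baseline is taken to be the same 2.5 TB/s figure.
write_ratio = ratio(SSD_WRITE_TBPS, PFS_WRITE_TBPS)
read_ratio = ratio(SSD_READ_TBPS, PFS_WRITE_TBPS)

# Assumption: decimal units, so 1 TB = 1,000 GB.
gb_per_movie = PFS_WRITE_TBPS * 1000 / 100

print(f"SSD write vs. PFS: {write_ratio:.2f}x")
print(f"SSD read vs. PFS: {read_ratio:.2f}x")
print(f"Implied size of one Blu-ray movie: {gb_per_movie:.0f} GB")
```

The ratios come out to about 3.9 and 10.7, consistent with "nearly four times" and "ten times," and the implied 25 GB per movie matches the capacity of a single-layer Blu-ray disc.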

The ability to take advantage of the local SSD storage can benefit many applications. Various solutions already exist that require users to modify their codes to send their data to local storage first and eventually transfer it to Spectrum Scale. But the TechInt team sought to provide a novel way of handling the issue without users having to make any changes to their codes. Enter Spectral and SymphonyFS.

New storage tools to save the data

With Spectral and SymphonyFS, users will be able to transparently leverage Summit’s local storage, and their data will then be moved seamlessly to the parallel file system. Although similar, the tools were built for slightly different use cases.

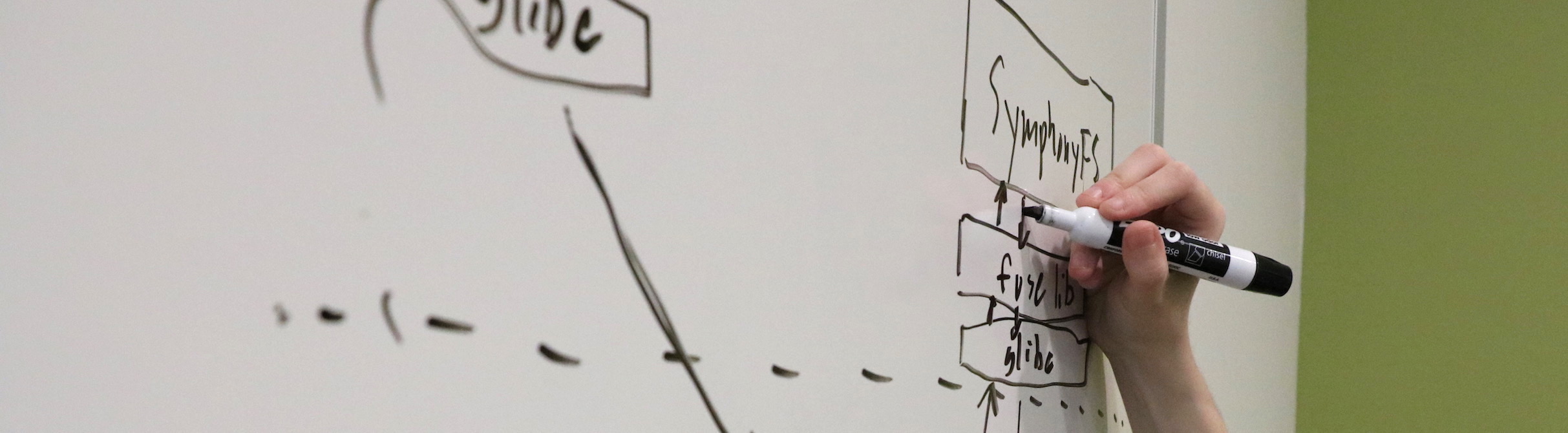

Spectral currently caters to scientific applications that use a file per process (FPP) method of writing data, in which each process writes data to a separate file. SymphonyFS caters to applications that use a single shared file (SSF), in which data pieces are written to the same file.
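The difference between the two write patterns can be illustrated with a small Python sketch. It is not taken from either tool: the "processes" are simulated by a loop in a single program, the file names are made up, and the shared-file version assumes every process writes a chunk of the same size.

```python
import os
import tempfile


def write_file_per_process(directory, chunks):
    """FPP: every (simulated) process writes its chunk to its own file."""
    paths = []
    for rank, chunk in enumerate(chunks):
        path = os.path.join(directory, f"checkpoint.rank{rank}.dat")
        with open(path, "wb") as f:
            f.write(chunk)
        paths.append(path)
    return paths


def write_single_shared_file(directory, chunks):
    """SSF: every (simulated) process writes its chunk into one file,
    at an offset derived from its rank. Assumes equal-sized chunks."""
    path = os.path.join(directory, "checkpoint.shared.dat")
    chunk_size = len(chunks[0])
    # Create the file once; each process then opens it without truncating.
    open(path, "wb").close()
    for rank, chunk in enumerate(chunks):
        with open(path, "r+b") as f:
            f.seek(rank * chunk_size)
            f.write(chunk)
    return path


# Four simulated processes, each holding 4 bytes of "checkpoint" data.
chunks = [bytes([65 + rank]) * 4 for rank in range(4)]  # b"AAAA", b"BBBB", ...

with tempfile.TemporaryDirectory() as workdir:
    fpp_paths = write_file_per_process(workdir, chunks)
    ssf_path = write_single_shared_file(workdir, chunks)
    with open(ssf_path, "rb") as f:
        shared_contents = f.read()

print(len(fpp_paths), "separate files under FPP")
print("shared file contents under SSF:", shared_contents)
```

Under FPP each file is complete as soon as its writer closes it, whereas under SSF the file is only complete once every process has written its piece. That difference is one plausible reason the two patterns call for different tools.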

Developed in 2016, Spectral exists as a transparent intercept library. These libraries work by intercepting and then carrying out system instructions. After a user has written a chunk of data to the local storage, the data file is closed so that the application can move on to saving the next chunk. Spectral intercepts this “close” call and copies the data to the Spectrum Scale PFS. The process, which is ideal for FPP jobs, works similarly to a relay race in which one person passes a baton to the next person, who completes the race.
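The close-then-copy idea can be mimicked in a few lines of Python. This is only an illustration of the control flow, not Spectral's implementation: real intercept libraries typically hook calls at the C library level so the application needs no changes, whereas this sketch uses an explicit wrapper class. The class name, the helper function, and the two temporary directories standing in for the SSD and the PFS are all hypothetical, and the copy here is done synchronously for simplicity.

```python
import os
import shutil
import tempfile


class CloseInterceptingFile:
    """Hypothetical wrapper: writes go to local storage; close() also
    copies the finished file to a directory standing in for the PFS."""

    def __init__(self, local_path, pfs_dir):
        self._local_path = local_path
        self._pfs_dir = pfs_dir
        self._file = open(local_path, "wb")

    def write(self, data):
        return self._file.write(data)

    def close(self):
        if self._file.closed:
            return None
        # First carry out the real close so the local file is complete...
        self._file.close()
        # ...then copy the closed file to the (simulated) parallel file system.
        return shutil.copy2(self._local_path, self._pfs_dir)


def checkpoint(name, data, ssd_dir, pfs_dir):
    """Write one checkpoint file locally and return the path of its PFS copy."""
    f = CloseInterceptingFile(os.path.join(ssd_dir, name), pfs_dir)
    f.write(data)
    return f.close()


with tempfile.TemporaryDirectory() as ssd_dir, \
        tempfile.TemporaryDirectory() as pfs_dir:
    pfs_copy = checkpoint("rank0.ckpt", b"step-100 state", ssd_dir, pfs_dir)
    copy_name = os.path.basename(pfs_copy)
    with open(pfs_copy, "rb") as f:
        copied = f.read()

print(copy_name, copied)
```

The application-side code only ever writes and closes a file. The copy to the second location happens as a side effect of the close, which is the "baton pass" described above.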

“Spectral can copy this data to IBM Spectrum Scale automatically on behalf of the user,” said Chris Zimmer, Spectral developer and HPC systems engineer in TechInt.

When it comes to SSF jobs, however, SymphonyFS is the tool of choice.

“While many of our applications use a file per process method, there are a significant number that rely on shared files, necessitating a tool like SymphonyFS to assist them,” said Chris Brumgard, SymphonyFS developer and HPC systems engineer in TechInt.

Developed in 2018, SymphonyFS is based on the software interface FUSE, or Filesystem in Userspace. SymphonyFS presents a single, coherent file system to the user by layering a combined file system on top of the local storage and IBM Spectrum Scale. During a write, the operating system takes a request and forwards it to SymphonyFS, which handles writing the data to the local SSD. Before the job ends, SymphonyFS ensures, independently of the scientific application, that the data has been pushed from the local SSD to the Spectrum Scale file system.
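The write-locally, push-later behavior for a shared file can be sketched in Python as follows. This is a toy model under stated assumptions, not SymphonyFS itself: it does not use FUSE, the class and method names are hypothetical, temporary directories stand in for each node's SSD and for Spectrum Scale, and each write is simply stored as a separate local "extent" file until an explicit flush.

```python
import os
import tempfile


class WriteBackOverlay:
    """Toy stand-in for one node's view of the combined file system:
    writes are buffered on local storage and pushed to the PFS on flush()."""

    def __init__(self, local_dir, pfs_dir):
        self.local_dir = local_dir
        self.pfs_dir = pfs_dir
        self._extents = []  # (file name, offset in shared file, local path)

    def write(self, name, offset, data):
        # Store the piece locally, remembering where it belongs in the file.
        local_path = os.path.join(self.local_dir, f"{name}.{offset}.extent")
        with open(local_path, "wb") as f:
            f.write(data)
        self._extents.append((name, offset, local_path))

    def flush(self):
        # Push every buffered piece to its offset in the shared PFS file.
        for name, offset, local_path in self._extents:
            with open(local_path, "rb") as src:
                payload = src.read()
            target = os.path.join(self.pfs_dir, name)
            # Open for update without truncating; create the file if missing.
            fd = os.open(target, os.O_RDWR | os.O_CREAT, 0o644)
            with os.fdopen(fd, "r+b") as dst:
                dst.seek(offset)
                dst.write(payload)
        self._extents.clear()


with tempfile.TemporaryDirectory() as ssd0, \
        tempfile.TemporaryDirectory() as ssd1, \
        tempfile.TemporaryDirectory() as pfs:
    node0 = WriteBackOverlay(ssd0, pfs)
    node1 = WriteBackOverlay(ssd1, pfs)
    node0.write("shared.ckpt", 0, b"AAAA")
    node1.write("shared.ckpt", 4, b"BBBB")
    on_pfs_before_flush = os.path.exists(os.path.join(pfs, "shared.ckpt"))
    node1.flush()  # flush order does not matter; offsets place the data
    node0.flush()
    with open(os.path.join(pfs, "shared.ckpt"), "rb") as f:
        merged = f.read()

print(on_pfs_before_flush, merged)
```

In this sketch, two simulated nodes each hold one piece of the same shared file on their own local storage, and the complete file appears on the shared store only after both have flushed.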

Spectral is available on Summit today for beta testing, and TechInt expects that SymphonyFS will be integrated into Summit this year after internal OLCF security auditing.

The OLCF is a US Department of Energy (DOE) Office of Science User Facility located at DOE’s Oak Ridge National Laboratory.

UT-Battelle LLC manages ORNL for DOE’s Office of Science. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States and is working to address some of the most pressing challenges of our time. For more information, please visit https://science.energy.gov