Smashing particles at near light speed is one thing. Aggregating enough storage and computing power to sort through the aftermath is quite another.

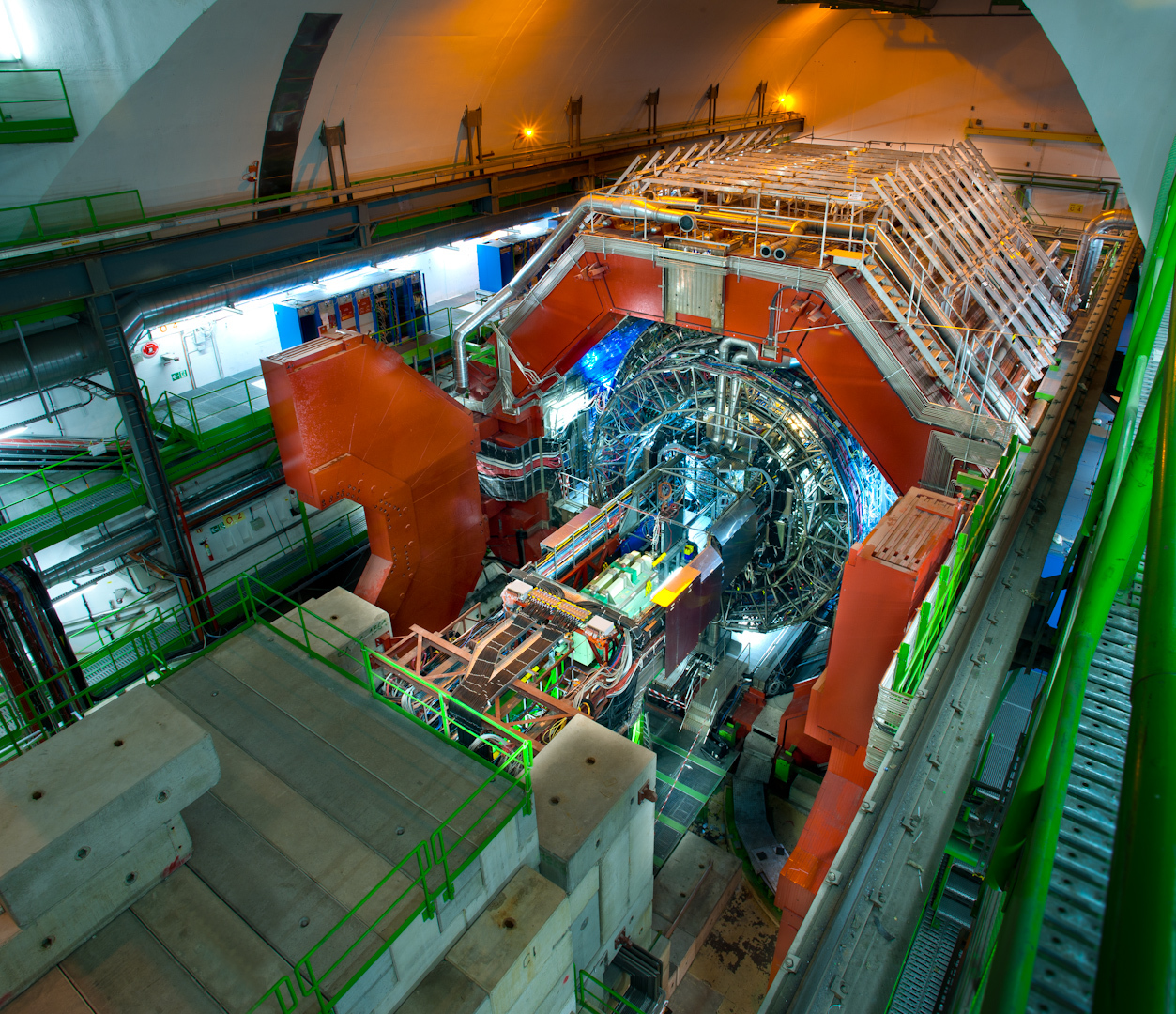

For the former, scientists have the Large Hadron Collider (LHC)—the world’s largest particle accelerator—managed by CERN, the European Organization for Nuclear Research. For the latter, they have the CERN-administered Worldwide LHC Computing Grid (WLCG), a global network of computing resources dedicated to storing, distributing, and analyzing data from LHC experiments.

Since 2015, the US Department of Energy’s (DOE’s) Oak Ridge National Laboratory (ORNL) has contributed to the WLCG, providing compute and storage resources for the ALICE experiment. ALICE, an LHC experiment dedicated to recreating primordial matter as it existed microseconds after the Big Bang, is helping scientists understand how fundamental particles form and interact and how they create the mass in protons.

As a member of ALICE-USA, ORNL is one of the primary organizations helping DOE fulfill its computing commitment to ALICE. The arrangement has lent ORNL expertise to CERN’s computing grid and strengthened collaborative ties among ORNL, CERN, and computing centers around the world.

“DOE’s collaboration with CERN and the European high-energy physics community mutually furthers institutional research objectives. Over time, the sharing of data storage tools and expertise has provided value to scientific projects and users at ORNL and other research facilities,” said ORNL systems architect Pete Eby.

At ORNL, dedicated ALICE resources are housed within the Compute and Data Environment for Science (CADES), staffed by members of the Oak Ridge Leadership Computing Facility’s (OLCF’s) Advanced Data and Workflow Group. Currently, the CADES–ALICE site consists of 1.5 petabytes of storage and 1,500 computing cores for around-the-clock analysis, with plans to increase both in the years ahead. In addition to providing CERN with dedicated storage and computing resources, the CADES–ALICE site leverages the lab’s high-performance network to shuttle data to CERN data centers in Geneva and Budapest and to other WLCG sites. With a peak of 40 gigabits per second, the network is one of the most robust on the grid despite the transatlantic connections. Network improvements will soon connect ORNL and CERN sites over a 100-gigabit-per-second data network provided by DOE’s ESnet and dedicated to high-energy physics experiments.

“At any given moment, the grid is handling about 120,000 jobs,” Eby said. “It takes a lot of data to keep 120,000 jobs happy. Our proximity to high-performance networking allows us to provide data to grid sites even though geographically we’re an awful long way from them.”

Eby and ORNL systems architect Michael Galloway serve as the primary administrators of the CADES–ALICE facility. Both played instrumental roles in the design and deployment of a resource that incorporates the most current data management technology. For example, the site adopted ZFS as its base file system to maximize data flexibility and protection. Additionally, ORNL is among the first grid sites to use a CERN-developed storage solution called EOS, which provides increased control over data management, resiliency, and availability across grid centers.

The ORNL team worked closely with CERN and vendors to design and deploy the disk-based, low-latency storage service, and they presented the architecture at the international ALICE Tier 1/Tier 2 workshop in Turin, Italy. The team also contributed to an EOS frequently asked questions document to help other sites adopt the software.

“As an early adopter of EOS, ORNL could critically assess all aspects of the product—from documentation and deployment to operation and runtime problems,” said ALICE distributed computing coordinator Latchezar Betev, who works at CERN. “The expertise of the ORNL team has helped computing centers around the world improve their infrastructure quality and operations efficiency.”

Eby said the team’s experience with EOS has prompted it to explore applying the software to ORNL and DOE projects, especially collaborations in which large datasets are shared between institutions. “There are commercial solutions that do this, but they are expensive, particularly at multi-petabyte scale,” he said. “EOS is an open-source project designed for geographic data replication over high-latency networks, which other research institutes can participate in.”

Another promising development stemming from ORNL’s ALICE involvement is the software distribution service CernVM File System (CVMFS), a tool developed by CERN to distribute and centrally update software on all grid sites simultaneously. “This portable software environment is something we think could be very beneficial to other science communities, especially when paired with a technology like container management systems,” Eby said. “There are use cases where CVMFS can simplify software delivery between cloud and traditional compute environments, providing reproducible tools and environments between facilities and across computing environments.”

With plans to upgrade the LHC in the next few years, CERN is projecting an exponential increase in the amount of data to analyze. One avenue being explored to meet this challenge is the use of supercomputers, such as the OLCF’s 27-petaflop Titan supercomputer. Researchers have begun preliminary work at the OLCF and at Lawrence Berkeley National Laboratory’s National Energy Research Scientific Computing Center, another DOE high-performance computing (HPC) center that is co-located with the second ALICE-USA Tier 2 site, to explore the feasibility of this approach.

“There is a growing recognition that the big HPC centers have resources we really should look at leveraging,” said Jeff Porter, ALICE-USA project lead. “The fact that ALICE-USA has two Tier 2 centers housed next to these big machines is an important advantage for the future.”

Oak Ridge National Laboratory is supported by the US Department of Energy’s Office of Science. The single largest supporter of basic research in the physical sciences in the United States, the Office of Science is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.