Nearly 500 attendees from universities, energy and IT companies participated in the Rice University Oil and Gas HPC Workshop in Houston.

Contingent visits Texas for latest in oil/gas HPC

While researchers investigating alternative energy technologies such as wind and solar power are embracing modeling and simulation via HPC, the more traditional oil and gas companies are the real “power” users in the energy industry. For decades HPC has provided a valuable return on investment to those firms in the areas of advanced seismic processing and reservoir modeling.

To address both the promise and potential shortcomings of current and emerging HPC technologies as they relate to oil and gas industry requirements, the Ken Kennedy Institute for Information Technology at Rice University hosted its annual Oil and Gas HPC Workshop on March 6 in Houston, Texas.

In its seventh year, the workshop bills itself as “the premier meeting place for networking and discussion focused on computing and information technology [IT] challenges and needs in the oil and gas industry.” Nearly 500 attendees from energy and IT companies and universities participated in 2014.

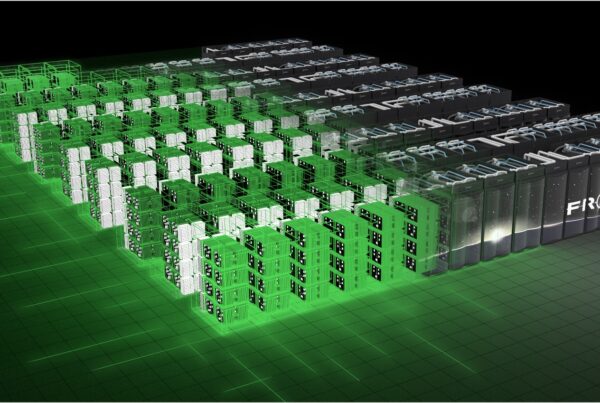

Given that the larger oil and gas firms are leading the broader industrial community in the use of petaflop systems and will need to prepare for exascale capability, Suzy Tichenor, director for Industrial Partnerships for the Oak Ridge Leadership Computing Facility, reached out to the workshop’s Program Committee and suggested that ORNL researchers could add a valuable perspective to the agenda. The committee agreed and, impressed by the abstracts that David Bernholdt and Scott Klasky submitted, invited both to speak.

ORNL’s Computer Science Research Group Leader Bernholdt gave a plenary talk titled “Some Assembly Required? Thoughts on Programming at Extreme Scale,” which served as an overview of current research directions in programming environments at ORNL and the broader Department of Energy (DOE) community, in particular those targeting computational science and engineering applications on leading computer systems. He also touched on work in programming languages and related tools, communication middleware, operating systems, fault tolerance, and the engineering of scientific software.

ORNL’s Scott Klasky spoke on “Programming Models, Libraries, and Tools: Research and Development into Extreme Scale I/O Using the Adaptable I/O System (ADIOS),” in which he addressed the disparity between I/O and compute capability, particularly at the exascale. Klasky is part of a team that developed ADIOS, which provides the highest level of synchronous I/O performance for a number of mission-critical applications at various DOE Leadership Computing Facilities and, as a result, won an R&D 100 award in 2013.

“It is critical for DOE researchers to understand the complex space of problems which arises in the oil and gas industry, and participating in this workshop allowed me to have a deeper understanding of the challenges that this field is going through as its data gets richer in size and complexity,” said Klasky. “My hope is that we can collaborate together in the future, and workshops like this allow us to communicate and exchange ideas and are very valuable to our scientists.”

“While many of the attendees were aware that Oak Ridge had large-scale HPC systems, they were not as familiar with the corresponding deep computer science expertise we have or the opportunity to engage with us on common research topics,” said Tichenor. “This was a terrific opportunity to introduce them to our world-class talent and invite them to explore the tools we have developed.”