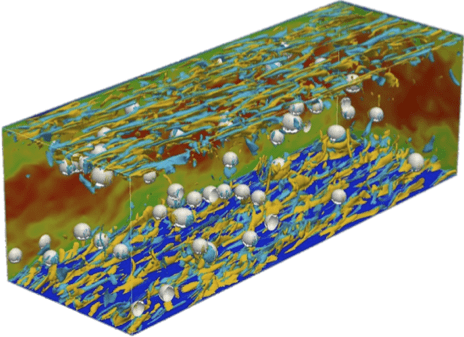

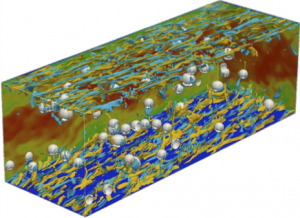

High-fidelity simulation of bubbly flow between parallel plates. Turbulent structures are shown in yellow and cyan; bubbles are shown in light gray. Image Credit: Igor Bolotnov

Titan enables next-gen models for existing, tomorrow’s fission reactors

As America’s energy consumption continues to increase, all options are necessary to fulfill our energy needs.

Currently, roughly 20 percent of America’s electricity comes from nuclear power, a share that could grow as the nation seeks to maximize output while decreasing emissions from fossil fuels.

The battle for abundant and affordable nuclear power takes place on two fronts: extending the life and improving the performance of existing reactors and developing new, more efficient reactors with unprecedented safety margins.

And few physical processes are more important to both fronts than boiling water, perhaps the most crucial element of the power production process. In light-water reactors (the most common type of nuclear reactor), fuel rods heat water that is used to produce steam, which then passes through turbines to generate electricity. In some reactors, the water used to cool the fuel rods boils and produces steam directly, while in others the coolant water is at a higher pressure that prevents boiling, with a lower-pressure intermediate stage where boiling produces steam.

Regardless of the specifics of the design, understanding the boiling process is critical, since the transfer of heat from the fuel rods to the coolant is central to both the efficiency and safety of the reactor. And when water boils, it makes bubbles, which means that in order to understand boiling, you have to understand bubbly flow, in which liquid water and steam mix together.

To increase efficiency and enhance safety, a team led by Igor Bolotnov of North Carolina State University has turned to Titan, the Oak Ridge Leadership Computing Facility’s Cray XK7 supercomputer, to perform state-of-the-art direct numerical simulation (DNS) to characterize such turbulent bubbly flows. Titan’s scalable architecture and large total memory enable these intensive calculations. To simulate boiling to the point of predictability, researchers need a powerful computer, and Titan is about as powerful as they come.

However, even Titan has its computational limits, and the simulation challenges of such complex multiphase fluid dynamics are immense. Because these calculations are so complex, researchers need to know where to make the necessary approximations in simulating the boiling process. And by comparing single-phase (i.e., liquid water alone) and multiphase turbulent flow, Bolotnov’s team is getting closer every day.

The accuracy and precision of Bolotnov’s simulations provide fundamental data on the nature of turbulence in these systems. When analyzed, these data allow researchers to propose new and improved models of bubbly flows: lower-fidelity models that are more accurate than current ones yet far less computationally expensive than DNS.

Furthermore, using DNS on high-performance computing resources means researchers can explore different conditions, such as higher pressures or the flow between a rod and a reactor, quickly and cheaply, something that would be impossible with experiment alone.

“We want to be able to predict the behavior of the boiling,” said Bolotnov, whose team’s work was featured in the April 3, 2013 issue of Journal of Fluids Engineering. “With simulation, you can experiment with any temperature and any pressure,” thus greatly expediting the discovery process and, by extension, bringing novel reactor designs to market as quickly and as cheaply as possible.

Essentially, Bolotnov’s team, which includes Kenneth E. Jansen of the University of Colorado at Boulder and Michael Z. Podowski of Rensselaer Polytechnic Institute, is developing models for different reactor designs, both existing and in-the-works. Their parallel flow solver PHASTA has utilized up to 46,000 of Titan’s CPU cores to study small-scale, individual bubble formation and evolution and how these bubbles affect the surrounding liquid.

For existing reactors, the goal is to increase fuel usage and produce more power. When that happens, boiling becomes more intense and safety becomes paramount, particularly in aging reactors.

For new designs, physical experiments are both expensive and hazardous, making accurate models paramount to efficient and rapid advanced reactor deployment. With simulation, problematic designs can be weeded out quickly, before expensive prototypes are tested.

“The research is an important investment in the sense that you can improve [the] existing fleet and improve the design of future fleets,” said Bolotnov, adding that higher-fidelity simulations can also allow nuclear reactors to operate with increased efficiency due to the improved models.

While a typical light-water reactor has around 50,000 individual fuel rods, Bolotnov is studying flow around a single rod in order to improve models that can be used to develop more advanced, safer reactor designs.

The results will help determine whether current turbulence models are relevant to bubbly flow applications or whether more complex Reynolds-stress turbulence models, which track the turbulent fluctuation energy in all directions, must be developed and applied for two-phase computational fluid dynamics modeling.

Pick a (Reynolds) number

The team’s research is funded by Oak Ridge National Laboratory’s Consortium for the Advanced Simulation of Light-Water Reactors (CASL). DOE’s first energy innovation hub, CASL is developing models and tools to predict the performance of today’s nuclear reactors through comprehensive, science-based modeling and simulation technology that is deployed and applied broadly throughout the nuclear energy industry to enhance safety, reliability, and economics.

Boiling is a primary focus toward these aims; or, more specifically, the turbulence caused by the boiling process. “Turbulence has long been thought of as somewhat mysterious,” said Bolotnov.

“The good news is that if you have the computational power you can use basic, fundamental laws to resolve the fluctuations. Oftentimes the simulation data is even more useful than that from experiments because you can see things develop more clearly,” he added.

Because turbulence is so computationally complex, simulating it at large Reynolds numbers requires very fine meshes, which in turn demand more powerful computers. The Reynolds number is the ratio of inertial to viscous forces in a flow.
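As a rough illustration of why higher Reynolds numbers are so costly, the sketch below computes a Reynolds number from the standard definition (density times velocity times length, divided by dynamic viscosity) and applies the classical Kolmogorov-scaling estimate that the grid-point count for three-dimensional DNS grows roughly as Re^(9/4). The fluid properties and flow values are illustrative assumptions, not parameters from the team's simulations, and the scaling estimate is a textbook rule of thumb for idealized turbulence rather than a statement about PHASTA.

```python
def reynolds_number(density, velocity, length, viscosity):
    """Ratio of inertial to viscous forces: Re = rho * U * L / mu."""
    return density * velocity * length / viscosity


def relative_dns_grid_points(re):
    """Textbook Kolmogorov-scaling estimate: grid points grow like Re^(9/4).

    Only ratios of this quantity are meaningful; the constant of
    proportionality is omitted.
    """
    return re ** 2.25


# Illustrative values only (assumptions, not taken from the article):
rho = 1000.0   # kg/m^3, roughly liquid water at room temperature
mu = 1.0e-3    # Pa*s, roughly liquid water at room temperature
U = 1.0        # m/s, hypothetical mean flow velocity
L = 0.01       # m, hypothetical channel width

re = reynolds_number(rho, U, L, mu)
growth = relative_dns_grid_points(2 * re) / relative_dns_grid_points(re)

print(f"Re is about {re:.0f}")
print(f"Doubling Re multiplies the estimated grid size by about {growth:.2f}")
```

Under this estimate, doubling the Reynolds number multiplies the grid size by nearly a factor of five, before accounting for the smaller time steps a finer grid also requires.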

Because turbulence happens on numerous scales simultaneously (from a 0.1 mm bubble in the team’s simulations to the full evolution of a 2.5 mm bubble), finer meshes are necessary. However, finer meshes require more memory, so with 710 terabytes of memory Titan is a perfect match.

Even with a computer the size of Titan, however, researchers still have to make approximations. In order to get the best results possible within the computational limitations, Bolotnov’s team has employed adaptive mesh control, or the ability to automatically adjust grid resolution (and therefore accuracy) in certain regions of the simulation while it is evolving. This gives researchers a more complete picture of an extremely complicated process.
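The idea of concentrating grid resolution where it is needed can be sketched in one dimension. The example below is a minimal illustration, not PHASTA's algorithm: it repeatedly bisects any cell across which a hypothetical field changes by more than a tolerance, so cells end up small near a sharp interface (a stand-in for a bubble surface) and stay large elsewhere. The field, the tolerance, and the function names are all assumptions made for illustration, and the field here is fixed rather than evolving in time.

```python
import math


def field(x):
    """Hypothetical field with a sharp interface at x = 0.5."""
    return math.tanh((x - 0.5) / 0.02)


def refine(nodes, tol, max_passes=10):
    """Bisect every cell whose end-to-end change in the field exceeds tol."""
    for _ in range(max_passes):
        new_nodes = [nodes[0]]
        changed = False
        for a, b in zip(nodes, nodes[1:]):
            if abs(field(b) - field(a)) > tol:
                new_nodes.append(0.5 * (a + b))  # insert the cell midpoint
                changed = True
            new_nodes.append(b)
        nodes = new_nodes
        if not changed:  # every cell already meets the tolerance
            break
    return nodes


coarse = [i / 10 for i in range(11)]   # 10 uniform cells on [0, 1]
fine = refine(coarse, tol=0.2)
widths = [b - a for a, b in zip(fine, fine[1:])]

print(f"{len(coarse) - 1} cells before refinement, {len(fine) - 1} after")
print(f"largest cell: {max(widths):.4f}, smallest cell: {min(widths):.6f}")
```

A uniform mesh fine enough to resolve the interface everywhere would need far more cells than the refined mesh, which is the memory saving that makes adaptive approaches attractive.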

Next, the team plans on tweaking PHASTA so that it can take advantage of Titan’s GPUs, a step that will allow it to perform multiphase flow simulations for pressurized water reactor geometries such as spacer grids at realistic velocities. Spacers in the fuel bundles separate the individual rods and have a very complex geometry to enhance single- and two-phase heat transfer between nuclear fuel rods and the coolant flow. Modeling spacers accurately would require very large meshes (on the order of 10 billion elements) and significant computational resources. The team is developing advanced analysis tools to ensure that it can take full advantage of the detailed information such simulations will provide.
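To get a feel for the size of a 10-billion-element mesh, the back-of-envelope arithmetic below combines figures quoted in this article. It assumes, purely hypothetically, that such a mesh were spread over the same 46,000 CPU cores PHASTA has used so far, and that all 710 terabytes of Titan's memory (taken as decimal terabytes) were available to the job. Neither assumption describes a planned run.

```python
elements = 10e9               # "on the order of 10 billion elements"
cores_used = 46_000           # CPU cores PHASTA has used to date
total_memory_bytes = 710e12   # 710 terabytes, assuming decimal units

# Hypothetical even split of the mesh across the cores used so far.
elements_per_core = elements / cores_used

# Upper bound on memory per element if the whole machine were available.
bytes_per_element = total_memory_bytes / elements

print(f"about {elements_per_core:,.0f} elements per core")
print(f"at most about {bytes_per_element / 1e3:,.0f} kB of memory per element")
```

These are rough orders of magnitude: the actual memory needed per element depends on the solver's data structures, which the article does not describe.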

“It’s extremely important to develop tools to extract new data and knowledge,” said Bolotnov, adding that higher Reynolds numbers and higher numbers of bubbles are also in the team’s future plans.

The team hopes to simulate an entire reactor core at some point in the near future. As PHASTA is modified to harness Titan’s GPUs, the models will evolve and become increasingly precise, eventually helping to guide reactor design and secure America’s energy future.

Related Publication:

I.A. Bolotnov, “Influence of Bubbles on the Turbulence Anisotropy,” Journal of Fluids Engineering 135(5), 051301 (Apr 03, 2013), doi: 10.1115/1.4023651