HPC experts meet to discuss current and future computing and data technologies

Supercomputing experts from around the world used a recent meeting in the Tennessee Smokies to grapple with the challenges of “big data.”

Sponsored by Oak Ridge National Laboratory’s (ORNL’s) Computing and Computational Sciences Directorate, the annual Smoky Mountains Computational Sciences and Engineering Conference brought together approximately 100 key high-performance computing (HPC) players from government, academia, and industry.

The goal of this year’s meeting, held in Gatlinburg, Tennessee, was to advance understanding of state-of-the-art technologies in HPC, simulation science, and data analytics and the integration of these symbiotic disciplines into science and engineering technologies. The conference was divided into three sessions.

Barney Maccabe, director of ORNL’s Computer Science and Mathematics Division, kicked off the first session by talking about the architecture of the computing, data, and communication infrastructures needed to support science applications.

“We want to learn what the architecture of computing and data integration might look like by asking the right questions,” Maccabe said. “For instance, where do we place computing in a data infrastructure to reduce the amount of data moving through the system?”

To help answer this question, Maccabe brought along Boston University’s Orran Krieger, the founding director of the Center for Cloud Innovation.

Krieger discussed the Massachusetts Open Cloud Initiative—a cloud infrastructure that would allow for a large community of researchers, innovators, and industry participants to work together and develop their own computing and data architectures in the cloud.

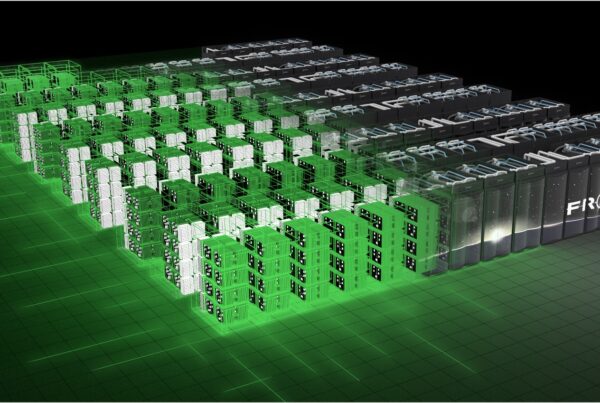

The second session leader, Galen Shipman, provided a look at the vendor ecosystem that would develop infrastructures for HPC and big data integration in the future.

“During our session we wanted to highlight how large-scale systems for HPC and big data provide the foundational infrastructure to support science and engineering,” Shipman said.

Industry vendors—Pete Ungaro of Cray, Bill Dally of NVIDIA, Al Gara of Intel, and Jorge Titunger of SGI—discussed their companies’ roadmaps in tackling this challenge.

In the final session, Oak Ridge Leadership Computing Facility (OLCF) Director of Science Jack Wells spoke on “Delivering Breakthrough Science.”

“HPC has advanced to the point that integration with complex data sets is the norm, which brings simulation and data analytics together,” said Wells. “These two disciplines can be combined to reach goals, impact society, and allow for breakthrough science.”

ORNL researchers and UT Governor’s Chairs Suresh Babu and Jeremy Smith joined Wells for the session.

Babu talked about his breakthrough research in additive manufacturing, also known as 3D printing, and how it promises to revolutionize manufacturing. Smith showed how intertwining neutron and computational sciences benefits his research.

“It is important to see some of the current successful models that integrate computing and data techniques,” said Wells. “In doing so, we hope to hear from the community what requirements are needed for even greater integration in the future.”

By Austin Koenig