Collaborators work to improve certainty of ice, Earth, atmosphere, and ocean simulations

ORNL climate scientist Kate Evans (left) and computer scientist Patrick Worley discuss ways to improve a climate simulation projected on the EVEREST visualization wall. By scaling up climate codes, researchers are able to simulate individual climate components in higher resolution and get more precise results. Photo credit: Jason Richards, ORNL

Researchers at Oak Ridge National Laboratory (ORNL) are sharing computational resources and expertise to improve the detail and performance of a scientific application code that is the product of one of the world’s largest collaborations of climate researchers. The Community Earth System Model (CESM) is a mega-model that couples components of atmosphere, land, ocean, and ice to reflect their complex interactions. By continuing to improve science representations and numerical methods in simulations, and exploiting modern computer architectures, researchers expect to further improve the CESM’s accuracy in predicting climate changes. Achieving that goal requires teamwork and coordination rarely seen outside an orchestra melding diverse instruments into a single symphonic sound.

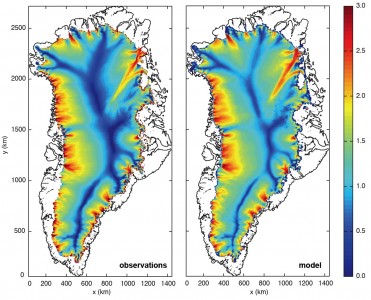

“Climate is a complex system. [We’re] not solving one problem, but a collection of problems coupled together,” said ORNL computational Earth scientist Kate Evans. Of all the components contributing to climate, ice sheets such as those covering Greenland and Antarctica are particularly difficult to model—so much so that the Intergovernmental Panel on Climate Change (IPCC) could not make any strong claim about the future of large ice sheets in its 2007 Assessment Report, the most recent to date.

Evans and her team began the Scalable, Efficient, and Accurate Community Ice Sheet Model (SEACISM) project in 2010 in an effort to fully incorporate a three-dimensional, thermomechanical ice sheet model called Glimmer-CISM into the greater CESM. The research is funded by the Department of Energy’s (DOE’s) Office of Advanced Scientific Computing Research (ASCR). Once fully integrated, the model will be able to send information back and forth among other CESM codes, making it the first fully coupled ice sheet model in the CESM.

Through the ASCR Leadership Computing Challenge, the team of computational climate experts at multiple national labs and universities received allocations of processor hours on the Oak Ridge Leadership Computing Facility’s (OLCF’s) Jaguar supercomputer, which is capable of 2.3 petaflops, or 2.3 thousand trillion calculations per second.

Evans said the team is on track to have the code running massively parallel by October of 2012. Currently, simulations of a small test problem have employed 1,600 of Jaguar’s 224,000 processors. Evans said the team expects that number to expand substantially in the near future when they begin simulating larger problems with greater realism.

The SEACISM project will improve the fidelity of ice-sheet models. The simulated (right) versus observed (left) flow velocities for Greenland ice sheets match well. Simulation data from Steve Price, Los Alamos National Laboratory; observational data from Jonathan Bamber and colleagues in Journal of Glaciology, 46, 2000.

The CESM began as the Community Climate Model in 1983 at the National Center for Atmospheric Research (NCAR) as a means to model the atmosphere computationally. In 1994, NCAR scientists pitched to the National Science Foundation (NSF) the idea of expanding their model to include realistic simulations of other components of the climate system. The result was the Climate System Model—adding land, ocean, and sea ice component models—which was renamed the Community Climate System Model (CCSM) to recognize the many contributors to the project. Development of the CCSM also benefitted from DOE and National Aeronautics and Space Administration (NASA) expertise and resources.

The CCSM developed into the CESM as its complexity increased, and today the model is a computational collection of the Earth’s oceans, atmosphere, and land, as well as ice covering land and sea, that calculates in chorus with its components. “[The model] is about getting a higher level of detail, improving our accuracy, and decreasing the uncertainty in our estimates of future changes,” said Los Alamos National Laboratory climate scientist Phil Jones, who leads that laboratory’s Climate, Ocean, and Sea Ice Modeling group. The group develops the first-principles ocean, sea ice, and ice sheet components of CESM, and its members are interested in sea-level rise, high-latitude climate, and changes in ocean thermohaline circulation, the aqueous transport of heat and minerals around the globe.

The team is changing its ocean code, the Parallel Ocean Program, to become the Model for Prediction Across Scales–Ocean (MPAS-Ocean) code. Unlike the Parallel Ocean Program, MPAS-Ocean is a variable-resolution, unstructured grid model, which will allow researchers to sharpen simulation resolution on a regional scale when they want to look at climate impacts in particular localities.

The CESM has continually grown in intricacy, enabling researchers to calculate more detail over larger spatial scales and longer time scales. Further, researchers are able to introduce more complex physics variables and simulate in greater detail Earth’s biogeochemical components—chemical and ecosystem impacts on the climate. But these advances do not come without a price.

“At any given time when we’re integrating the model forward, it’s very [computationally] expensive—very time consuming and using large amounts of memory,” Evans said. “It all has to work in concert to generate huge amounts of data that we then need to analyze afterward.”

Conducting climate codes

Researchers and code developers for the CESM are scattered around the United States. One of their biggest challenges is tying together separate climate code components created in different places on different computer architectures. What’s more, climate researchers usually focus on a specific aspect of the climate, such as ocean or atmosphere. An atmosphere scientist, for example, needs the ability to raise resolution in the atmosphere but may want to lower the resolution used in the ocean to minimize the cost of the simulation. ORNL computer scientist Patrick Worley helps researchers optimize their codes, making him one of a small group in the CESM community who specialize in conducting the climate code orchestra.

“There are many scientific issues with getting simulations right, and the computer scientists are involved with helping the scientists test and optimize them,” said Worley, who serves as a co-chair in the CESM software engineering working group dedicated to solving the unique challenges climate research imposes on computing resources.

A number of computational issues distinguish climate science from other scientific disciplines that make heavy use of simulations, Worley said. First, if researchers want to change their problem size, they must rework how the simulations represent physical processes to suit the correspondingly increased or decreased resolution. Second, many researchers focus only on particular areas of the Earth during their simulations, meaning they need only a particular part of the CESM framework running in high resolution. Finally, climate simulations run at varying time scales, sometimes spanning several thousand years. The required time to solution and available computing resources can force researchers to choose between high spatial resolution in their models and an extended observation period.
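The trade-off between spatial resolution and simulated time span can be made concrete with a rough cost model. The Python sketch below is illustrative only and does not come from the CESM. It assumes that refining the horizontal grid spacing by a factor r multiplies the number of grid columns by r squared and, through a stability limit on the time step, the number of time steps by r, so that cost grows roughly as r cubed times the simulated years. The function names and the 100-year baseline are hypothetical.

```python
def relative_cost(refinement: float, years: float, base_years: float = 100.0) -> float:
    """Cost of a run relative to a baseline of `base_years` at refinement 1.

    Assumption (not from the article): cost scales as refinement**3 * years,
    i.e. r**2 more grid columns and r times more time steps.
    """
    if refinement <= 0 or years < 0 or base_years <= 0:
        raise ValueError("refinement and base_years must be positive, years non-negative")
    return refinement ** 3 * (years / base_years)


def affordable_years(refinement: float, budget: float = 1.0, base_years: float = 100.0) -> float:
    """Simulated years that fit in `budget` baseline-runs of compute
    at the given refinement, under the same assumed scaling."""
    if refinement <= 0 or budget < 0 or base_years <= 0:
        raise ValueError("refinement and base_years must be positive, budget non-negative")
    return budget * base_years / refinement ** 3


if __name__ == "__main__":
    # With a fixed budget, each doubling of resolution cuts the
    # affordable simulated period by a factor of eight in this model.
    for r in (1.0, 2.0, 4.0):
        print(f"refinement x{r:g}: about {affordable_years(r):g} simulated years")
```

Under these assumptions, a compute budget that covers a century at baseline resolution covers only about a dozen years at twice the resolution, which is the kind of choice the researchers describe. Real models scale differently depending on their numerical methods, vertical resolution, and input/output costs.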

According to Evans, computer scientists like Worley help the climate scientists deal with that complexity in the coupled model. “Pat does bridge across many components. He can look at an ice code, which solves very different equations and is structured in a very different way than an atmospheric model, and be able to help us run both systems not only individually at their maximum ability but coupled together,” she said. “There are few in the climate community that have an understanding of how all of it works.”

A hybrid horizon

With software improvements and increasingly powerful supercomputers, resolution and realism in climate simulations have reached new heights, but there is still work to be done. “What we can’t do yet in the current model is get down to regional spatial scales so we can tell people what specific impacts are going to happen locally,” said Jones. “The current IPCC simulations are at coarse enough resolution that we can only give people general trends.”

Ocean simulations that include ecosystems are important for determining how much carbon the oceans can sequester in current and future climates. Chlorophyll, shown in green, is a useful measure of biological activity that can be compared with satellite ocean color measurements. Image credit: Matthew Maltrud, Los Alamos National Laboratory

Climate research concluded last year makes up the final pieces of information that will go into the next IPCC Assessment Report, due for release in 2013. Meanwhile, climate researchers are preparing for the future. “The climate research community doesn’t have a single climate center where they run all of their climate simulations—they run wherever there is supercomputing time available—so it’s important for the codes to run on as many different platforms as possible,” Worley said. Currently, US climate codes are running on National Oceanic and Atmospheric Administration, NASA, DOE, and NSF supercomputing resources.

One of the biggest challenges facing climate research, according to Worley, is writing the computational score for hybrid architecture harmonies. Next-generation supercomputers, such as the OLCF’s Titan, a 10–20 petaflop machine, will use both central processing units and graphics processing units to share the computational workload. This novel approach will require closer attention to all levels of parallelism and will alter the approach to computing climate. “There are a number of people that want to make sure we are not surprised by the new machines,” Worley said. If all goes well, CESM researchers may hear calls for an encore.—by Eric Gedenk