Researchers using the OLCF’s computational resources to simulate subsurface flows were recently awarded best paper at the 31st annual International Symposium of the Society of Core Analysts. Image courtesy of SPWLA, Ryan Armstrong, Maja Rücker, Steffen Schlüter, James McClure, and Mark Berrill.

For several years, a team led by Virginia Tech’s James McClure has been using computation to study subsurface multiphase flows. Researchers studying multiphase flows are essentially studying the interaction of flowing materials that either are in different phases (solids, liquids, or gases) or have chemical makeups that prevent mixing (such as oil and water).

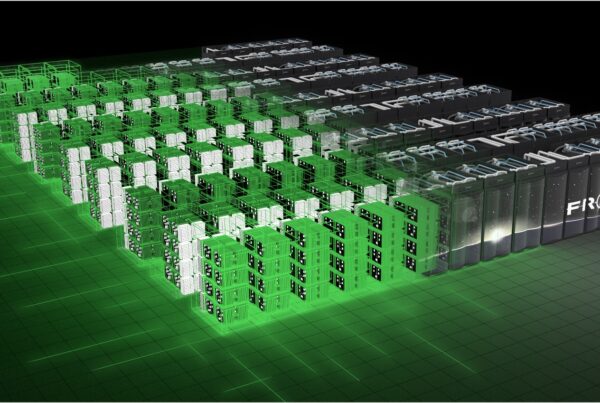

In its previous work, the team created a computational framework to study these complex subsurface interactions in greater detail. Recently, the team was awarded more computing time on high-performance computing (HPC) resources at the Oak Ridge Leadership Computing Facility (OLCF), a US Department of Energy (DOE) Office of Science User Facility located at DOE’s Oak Ridge National Laboratory, to take this process one step further.

A paper the team wrote on the expanded work, which involves using experimental data from synchrotron particle accelerators, was recently named best paper at the 31st annual International Symposium of the Society of Core Analysts (SCA), a professional organization dedicated to highlighting scientific research into rock and core analysis.

“A huge amount of data can be generated from experimental resources,” McClure said. “You need to have strategies to take this raw data and extract useful information from it. Micro computed tomography (micro-CT) is an important data source that creates opportunities for industry to better understand the behavior of fluids in reservoirs, and new tools are needed to gain more insights from that micro-CT data.”

With advances in imaging technology, researchers essentially can visualize the movement of fluids in underground reservoir rocks and other geologic materials.

The McClure team can then take this imaging data and compare it with simulations being performed on the OLCF’s Titan supercomputer, thus enabling the tracking of oil clusters underground in both space and time. During these simulations, the team found that “disconnected” oil, or oil that is not directly connected to a larger flowing oil reserve and often is thought to be immobile, actually does move and contribute to flow.
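To make the idea of "connected" and "disconnected" oil concrete, the following is a minimal sketch of cluster labeling on a segmented image. It is not the team's framework: the toy 2D grid, the function name `label_clusters`, and the rule that treats the largest cluster as the "connected" phase are all assumptions made for illustration. Real micro-CT data would be 3D and far larger.

```python
from collections import deque


def label_clusters(grid):
    """Label 4-connected clusters of oil cells (value 1) in a 2D grid.

    Returns a list of clusters, each a sorted list of (row, col) cells.
    """
    rows, cols = len(grid), len(grid[0])
    seen = [[False] * cols for _ in range(rows)]
    clusters = []
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != 1 or seen[r][c]:
                continue
            # Breadth-first flood fill from this unvisited oil cell.
            queue = deque([(r, c)])
            seen[r][c] = True
            cells = []
            while queue:
                y, x = queue.popleft()
                cells.append((y, x))
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and grid[ny][nx] == 1 and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            clusters.append(sorted(cells))
    return clusters


# Toy segmented image (hypothetical data): 1 = oil, 0 = water or rock.
image = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 1, 0],
    [0, 0, 0, 1, 0],
    [0, 1, 0, 0, 0],
]

clusters = label_clusters(image)
# Assumption for this sketch: the largest cluster stands in for the connected
# oil phase, and every other cluster counts as "disconnected" oil.
largest = max(clusters, key=len)
disconnected = [c for c in clusters if c is not largest]
```

Running the same labeling on successive time steps and matching clusters between them is one way cluster motion could be followed in both space and time.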

Because these advances allow simulations to capture fluid flow in unprecedented detail, the team can now study fluid physics occurring in micrometer-sized grains of rock and then scale the results up to the hundreds of meters of a full reservoir.

The SCA symposium attracts researchers from the oil and gas industry, and McClure’s team collaborates with researchers in both academia and industry. McClure collaborated with Steffen Berg of Shell Global Solutions, Maja Rücker of Imperial College London, Steffen Schlüter of the Helmholtz Centre for Environmental Research in Germany, and Ryan Armstrong of the School of Petroleum Engineering at the University of New South Wales in Australia.

McClure’s team also collaborated closely with OLCF computational scientist Mark Berrill to improve computational efficiency. Berrill helped the team run in situ data analysis for simulations on Titan, which uses both traditional CPUs and high-performance GPUs to achieve maximum performance. Because McClure’s team could run most of its calculations on Titan’s GPUs, Berrill enabled the CPUs to do data analysis while the simulation ran, reducing the need for extensive data transfer.
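The division of labor described above can be sketched with a producer and a consumer. This is only an illustration under stated assumptions, not the code that ran on Titan: the function names `simulate`, `analyze`, and `run_in_situ` are hypothetical, the "simulation" is a placeholder counter, and Python threads stand in for the GPU and CPU roles.

```python
import queue
import threading


def simulate(num_steps, snapshots):
    """Stand-in for the GPU simulation: advance a toy state, hand off snapshots."""
    state = 0.0
    for step in range(num_steps):
        state += 0.5  # placeholder for one time step of real physics
        snapshots.put((step, state))  # pass the snapshot to the analysis thread
    snapshots.put(None)  # sentinel: no more snapshots are coming


def analyze(snapshots, results):
    """Stand-in for CPU-side analysis: reduce each snapshot to a small summary."""
    while True:
        item = snapshots.get()
        if item is None:
            break
        step, state = item
        results.append((step, state * 2))  # keep only the reduced quantity


def run_in_situ(num_steps):
    # A bounded queue means raw snapshots never pile up in memory or on disk.
    snapshots = queue.Queue(maxsize=4)
    results = []
    worker = threading.Thread(target=analyze, args=(snapshots, results))
    worker.start()
    simulate(num_steps, snapshots)  # runs while the worker analyzes
    worker.join()
    return results


summary = run_in_situ(5)
```

The design point is that only the small `results` list outlives the run. The raw snapshots are consumed as they are produced, which is the sense in which in situ analysis avoids extensive data transfer.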

“In situ analysis allows us to track oil behavior in time,” McClure said. “Oil coalescence events happen on a really small timescale and large length scale, meaning you have to simulate a large amount of time steps. To do a macroscopic flow simulation, we have many of these events happening, and without in situ analysis, we would not be able to do these types of simulations.”

Armstrong noted the work has a significant effect on the oil and gas industry. “For enhanced oil recovery, we must understand how fluid flows through porous rock in the presence of water and other chemical additives,” he said. “HPC provides the opportunity for guiding what traditional experiments are necessary and under what protocols. These innovations could allow a company to reduce its experimental parameter space to only a few key enhanced oil recovery applications. With HPC and future computational capabilities, we will be able to increase the computational domain and move forward to a framework that spans all the multiscale physics from porous media flow.”

Oak Ridge National Laboratory is supported by the US Department of Energy’s Office of Science. The single largest supporter of basic research in the physical sciences in the United States, the Office of Science is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.