Building on the success of the Titan-era CAAR project, OLCF staff continue to offer training and workshops for CAAR teams in the lead-up to Summit.

Paper discusses OLCF efforts to transition to hybrid architectures, best practices for code portability

As far back as the 1990s, computational scientists and computer scientists alike saw the need to develop new methods for increasing the speed and efficiency of scientific discovery and porting scientific codes from one computer architecture to another.

Staff members at the US Department of Energy’s (DOE’s) Oak Ridge National Laboratory (ORNL) have innovated their way to solutions in performance portability and code development whenever a new disruptive technology challenges the status quo in high-performance computing.

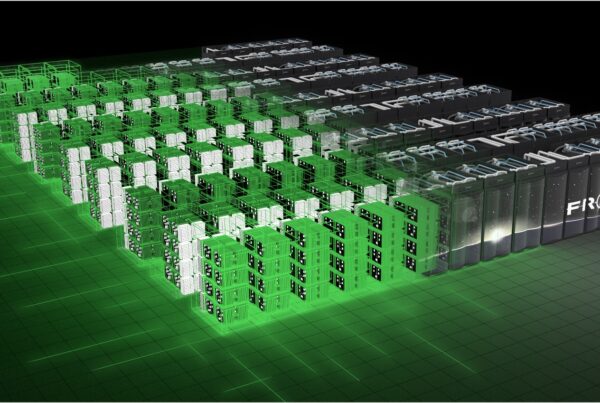

The Oak Ridge Leadership Computing Facility (OLCF), a DOE Office of Science User Facility located at ORNL, faced the most recent disruptive technology—the advent of GPUs being used to accelerate supercomputers—when it decided to build the Cray XK7 Titan supercomputer in 2010.

“Titan was high-risk, high-reward,” said OLCF computational scientist Wayne Joubert. He and other OLCF computational scientists knew that delivering science from day one would require a coordinated effort to prepare users and modify their codes to run effectively on Titan before the system was fully online.

As a result, OLCF staff worked with vendor partners Cray and NVIDIA to form the Center for Advanced Application Readiness (CAAR). To ensure that a wide variety of codes were prepared to run on Titan’s hybrid architecture, staff selected diverse codes that would represent scientific domains essential to DOE’s mission.

“We wanted to look at many types of codes to understand the patterns that needed to change and gauge the effort that refactoring process requires,” Joubert said. After going through the CAAR process and successfully launching Titan, OLCF computational scientists wrote a journal article describing their experiences with CAAR, the lessons learned, and best practices for users porting codes to hybrid architectures. The article was published in the August edition of Computers and Electrical Engineering.

The staff members learned that, above all, researchers who intend to continue working on high-performance computers likely will have to restructure their codes for hybrid computing architectures, and this dynamic process likely will continue well into the future.

Further, the CAAR project affirmed the need for users not just to port their codes to a single computing architecture, but rather to design them to be flexible enough to easily port to various computing architectures.

In the 1990s, computer scientists developed the Message Passing Interface (MPI), a standardized system that lets researchers use parallel computers, which can simultaneously perform many different calculations for a larger problem. Although parallel computing was a disruptive technology, in many cases researchers needed to change only a small fraction of the code in their applications.

Today, GPUs and other accelerators are forcing researchers to open their codes back up and make more wholesale changes. Many codes for research projects are still unable to fully exploit supercomputing hardware. As a result, the OLCF committed to another CAAR initiative in advance of the next-generation supercomputer, Summit, set to begin delivering science at the OLCF in 2018. More information about the current CAAR project is available from the OLCF.

“Many ideas in our paper are still part of the discussion about what has to be done for Summit,” Joubert said.

For more information, please visit: https://www.sciencedirect.com/science/article/pii/S0045790615001366

Oak Ridge National Laboratory is supported by the US Department of Energy’s Office of Science. The single largest supporter of basic research in the physical sciences in the United States, the Office of Science is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.