Hackathons give scientific computing teams from around the globe close access to leading experts in high-performance computing, along with access to some of the supercomputers at the experts' home institutions.

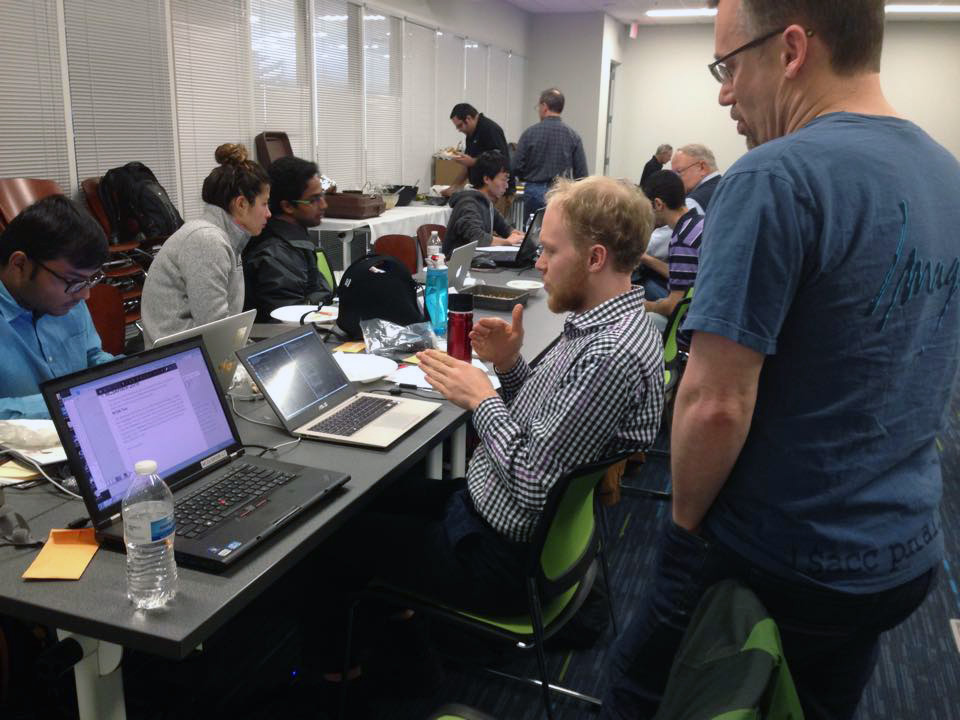

Programmers gather at NCSA for OpenACC GPU acceleration workshop

What good would a supercomputer be without its applications? As supercomputers inevitably move to newer, more diverse architectures, so too must their applications.

Building on the widely praised success of the inaugural hackathon in 2014, organizers from the Oak Ridge Leadership Computing Facility (OLCF), the University of Illinois Coordinated Science Laboratory and National Center for Supercomputing Applications (NCSA), NVIDIA, and The Portland Group (PGI) gathered in April 2015 at NCSA, on the university’s Urbana-Champaign campus, for the next round of application acceleration programming.

“The goal of the events is for mentors to help the teams prepare their next-generation applications for the next generation of heterogeneous supercomputers and for the teams to help mentors to gain insights on how to improve their tools and methods,” said Wen-mei Hwu, co-principal investigator for the Blue Waters supercomputer.

The event’s approximately 40 attendees included four science teams with important applications: SIMULOCEAN, Nek5000, VWM, and PowerGrid. Also present were mentors from NVIDIA, PGI, and Mentor Graphics, as well as a group led by Hwu from the CUDA Center of Excellence at the University of Illinois. In addition to working with mentors, each team was given accounts on five systems: NCSA’s JYC test system and Blue Waters supercomputer; NVIDIA’s internal cluster; and Titan and Chester (a Cray XK7 supercomputer and a single-cabinet Cray XK7, respectively) at the OLCF, a US Department of Energy (DOE) Office of Science User Facility at DOE’s Oak Ridge National Laboratory.

Teams spent the first four days learning various methods for porting their codes to GPUs. At the midpoint of each day, “scrum sessions” allowed programmers to take a break from coding, deliver progress reports, and receive feedback from the mentors and the other teams.

Teams focused primarily on five areas of learning:

- Programming methods for OpenACC

- Using GPU-accelerated libraries through library calls

- Code profiling techniques

- Program optimization

- Data transfer techniques

Access to five different systems allowed participants to put those methods into practice and to compare alternative compilation approaches, determining which worked best and on which systems. By the end of the four days, each team had achieved significant results.

“The summary of the successes of each team is that they now have a path forward,” said OLCF’s event organizer Fernanda Foertter. “Also, they learned OpenACC. In some cases, some of them had little to no experience with it.”

One team in particular made remarkable progress. The team working with PowerGrid, an advanced model for MRI reconstruction, used OpenACC for the first time and solved its problem 70 times faster than it could have on a workstation. Team members did so by developing both a GPU implementation and a parallel multinode implementation. In one run, they reconstructed 3,000 brain images with an advanced imaging model in just under 24 hours by using many Blue Waters GPU nodes simultaneously, a task that would have taken months without OpenACC.

“In the past we had to approximate our calculations because they were so computationally intensive,” said PowerGrid’s principal investigator Brad Sutton. “In certain situations these approximations have a negative impact on image quality; now we can achieve accurate solutions using OpenACC to maximize image quality and performance.”

PowerGrid team member Alex Cerjanic added, “The connections we made with our mentors and their organizations not only enabled us to reach our goals that week but also connected us to resources that we can use to continue moving our research forward.”

Another factor in the teams’ success was the PGI OpenACC compiler. Drawing on lessons learned from the previous hackathon, the latest compiler release offers improved usability and performance and includes a number of features and fixes implemented as a direct result of feedback from the 2014 event.

On the last day, all four teams delivered presentations to the attendees, detailing their overall experiences and achievements. Foertter presented the final results of the event a week later in Chicago at the 2015 Cray User Group Conference.

The success of the OLCF’s inaugural hackathon last year resonated within the HPC community, and by all accounts the NCSA event built on it even further. The series will continue: April’s event was the first of three hackathons scheduled in 2015, and the next one will begin July 10 in Switzerland at the Swiss National Supercomputing Centre (CSCS).

As the collaboration between the OLCF and NCSA shows, with the CSCS event soon to follow, hackathons are proving to be a powerful way to strengthen relationships among centers with heterogeneous architectures. Those relationships should become even more valuable as programmers around the world continue to advance the mission of science.

“Hackathons are an effective way for science and engineering teams to quickly migrate from traditional serial programming to many-core programming at scale,” said Blue Waters director Bill Kramer. “The intense sessions, with weeklong dedicated efforts by the teams, vendors, and experts, help to quickly enable teams to reengineer their codes for the future, something that could take months or more without hackathons. The progress of these four teams will enable them to do more and better research in the future.” —Jeremy Rumsey

Oak Ridge National Laboratory is supported by the US Department of Energy’s Office of Science. The single largest supporter of basic research in the physical sciences in the United States, the Office of Science is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.