Experts, including ORNL’s James Hack, search for ways to build knowledge of the Earth system

James Hack interprets climate models. His knowledge as a climate simulation expert and director of the National Center for Computational Sciences made him an asset to a committee formed by the National Academy of Sciences that reported on ways to quickly improve climate models. Photo credit: Jason Richards, Oak Ridge National Laboratory.

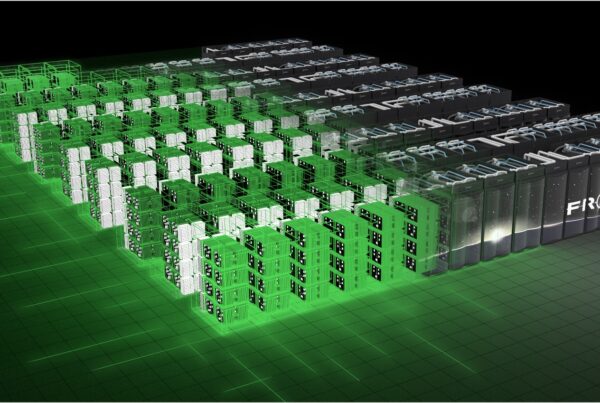

A committee formed by the National Academy of Sciences (NAS) and the National Research Council (NRC) has released a report recommending ways to advance climate modeling over the next two decades. Oak Ridge National Laboratory’s (ORNL’s) James Hack was a member of the 15-person committee. He was chosen because of his background as a climate model developer and his roles as head of the Oak Ridge Climate Change Science Institute and director of the ORNL National Center for Computational Sciences (NCCS), which houses the OLCF and the Titan supercomputer.

“As director of a facility like [the NCCS], I am very much aware of what the computational environments are all about, how they’re evolving, where they’re going, and what kind of challenge that’s going to present in the model-development process,” says Hack on why he was chosen to help with the report. His knowledge of climate model development and the supercomputing resources used to run climate simulations made him an invaluable asset to the committee.

Climate change is happening, and stakeholders want to know what the effects will be. The Intergovernmental Panel on Climate Change released the Fourth Assessment Report in 2007 with two key findings: (1) warming of the climate system is unequivocal, and (2) most of the observed increase in global average temperatures since the mid-20th century is very likely due to the observed increase in anthropogenic greenhouse gas concentrations. According to this assessment report, the effects of climate variability will sometimes be dramatic. Climate models played a vital role in arriving at these conclusions and will do the same for the Fifth Assessment Report, expected in 2014. For future assessments, the panel will need more detailed and advanced models than those available in the past. Stakeholder communities recognize that the only way to do experiments on climate change effects is in a virtual environment, which means simulating the best available representation of the climate system on high-performance computer systems.

Those stakeholder communities include the US Department of Energy, the National Oceanic and Atmospheric Administration, the National Science Foundation, the National Aeronautics and Space Administration, and the US intelligence community. These stakeholders asked the NRC for a report on a strategy for advancing climate modeling. The NRC is the operating arm of the NAS, which has provided advice on science and technology questions under a congressional charter since 1863.

“The models are imperfect,” says Hack. “They’ll always be imperfect by definition, so we’re going to continue to improve them and that happens at a certain rate. Given the fact that this problem, if you go back and believe the Fourth International Assessment Report’s conclusion that climate variability is real, then right now everything we’re doing is making our bed for the future. The consequences of the things we do today may not be seen for 20 or 30 or 40 years. While that’s happening, life goes on. People build dams and they build power plants, they change the terrestrial landscape, and there’s an ever-growing demand on the planet for energy as the population grows.”

The first goal of the NAS report was to assess how climate modeling is conducted in the United States right now. The committee brought in experts to investigate the strengths and challenges of current climate modeling approaches, such as how useful they are to decision making, what observations are needed to support model development, and what potential exists for improving the current techniques.

A major problem is that while current climate models are good at predicting changes due to climate variability on a global scale, they are not as good at predicting what will happen on a regional basis. They may be able to predict an increased incidence of drought on a continental scale, but regional interests may want to know if the summer is going to bring a drought in a particular area. Current models are not detailed enough to give them this information.

“If you’re managing water resources in the western United States where it’s very arid, knowing if it’s going to be a wet season or a dry season is very important for how you manage that resource,” says Hack. “Those folks are saying that they need better information.”

Another problem is that current climate models do not extend far enough into the future. The stakeholder communities want to know what the likely trends in climate will be over a long period, such as many decades into the future. Current models are not skillful in making detailed predictions on those time scales.

“If you build infrastructure, say a nuclear power plant on a river, you want to know whether or not there’s going to be water in that river 50 years from now because this is an investment that has a very long lifetime,” Hack says.

Having identified key problems, the committee discussed what further developments in climate modeling will be needed to solve them.

Recommendations

The committee recommended a national strategy for the next two decades that would advance climate modeling quickly in the areas where progress is most needed: resolving the regional ramifications of climate variability more specifically and projecting information farther into the future.

Evolve to a common software infrastructure. Computer software infrastructure is constantly changing. As director of the NCCS, Hack is familiar with this evolution as well as with the importance of adapting models so that they work well with new technology. The rest of the committee understood this need as well, so they advised the stakeholders that it is vital to track how the software is changing and to develop a common software infrastructure for modeling.

Convene a national climate modeling forum. The committee suggested an annual US climate modeling forum to bring the nation’s modeling communities together with the users of climate data. Currently, climate modelers and data users generally learn about strides in their fields through journals and conferences. The result is that information on new simulation techniques disseminates slowly. The NAS report indicates that convening an annual forum would be more efficient because it would help inform the community of new developments, provide a venue for discussions of modeling priorities, and bring separate climate science and computer science communities together to design common modeling experiments.

Nurture a unified weather-climate modeling effort. The committee also suggested that operational weather forecasting centers and the climate modeling community collaborate. Weather and climate differ in that weather describes short-term atmospheric conditions, up to about a month, while climate is the statistics of weather and an indicator of weather trends over a longer period. However, climate and weather both involve the same weather processes. Climate scientists run into problems because climate variations take place over extended periods, so it takes a long time to collect the observational data needed to test models. The recommendation is to make the fullest possible use of observations on weather time scales to validate the physical formulations of climate models.

Develop a program for climate model interpreters. Climate-data users vary greatly in their backgrounds as well as their needs. The goal of this recommendation is to develop a program for training experts on climate models who can explain models and adapt them for users with varied interests, from farmers who want regional rain-pattern forecasts to international development programs that want predictions about global patterns.

By Leah Moore