A High-Performance Communication Interface for HPC and Data Centers

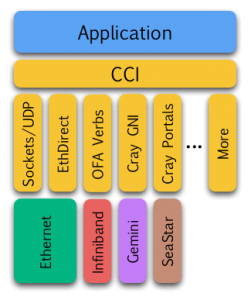

The CCI project is an open-source communication interface that aims to provide a simple and portable API, high performance, scalability for the largest deployments, and robustness in the presence of faults. It is developed and maintained by a partnership of research, academic, and industry members.

Targeted at high-performance computing (HPC) environments as well as large data centers, CCI can provide a common network abstraction layer (NAL) for persistent services as well as general interprocess communication. In HPC, MPI is the de facto standard for communication within a job. Persistent services, however, exist outside of scheduler jobs or span multiple jobs. Examples include distributed file systems, code coupling (e.g. a simulation sending output to an analysis application, which in turn sends its output to a visualization process), health monitoring, debugging, and performance monitoring. These services tend either to use BSD sockets for portability, which avoids rewriting the application for each new interconnect, or to implement their own NAL, which costs developer time and effort. CCI can simplify support for these services by providing a common NAL that minimizes their maintenance burden while improving performance (i.e. reduced latency and increased bandwidth) compared to sockets.

We are currently working on proof-of-concept implementations using sockets (UDP), Cray Portals 3.3, OpenFabrics Verbs, Cray GNI, and IBM BG/P (Kittyhawk), as well as raw Ethernet. Visit the CCI Forum for release information.

[tab: Concepts]CCI has a few key concepts outlined below.

Device

A device represents a network interface card (NIC) or host channel adapter (HCA) that connects the host and network. A device may provide multiple endpoints (typically one per core).

Endpoint

In CCI, an endpoint is the process's virtual instance of a device. The endpoint is the container for all the communication resources the process needs, including queues and shared send and receive buffers. A single endpoint may communicate with any number of peers and provides a single completion queue regardless of the number of peers. CCI achieves better scalability on very large HPC and data center deployments because the endpoint manages its buffers independently of how many peers it is communicating with.

CCI uses an event model in which an application may either poll or wait for the next event. Events include communication (e.g. send completions) as well as connection handling (e.g. incoming client connection requests). When the application no longer needs an event, it returns the event to CCI, and events may be returned out of order. Because a single completion queue manages all events, CCI scales better in time than sockets.
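The event model described above can be sketched with a toy Python model. This is an illustration only, not the CCI API: `ToyEndpoint`, `Event`, `post`, `poll`, `return_event`, and `on_loan` are hypothetical names, and the real library is not involved. It shows a single queue serving every peer, and events being returned in a different order than they were handed out.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class Event:
    """Hypothetical event record; CCI's real event layout is not shown here."""
    event_id: int
    kind: str   # e.g. "SEND" or "CONNECT_REQUEST"
    peer: str


class ToyEndpoint:
    """Toy model: one completion queue shared by every peer."""

    def __init__(self) -> None:
        self._pending = deque()  # events not yet handed to the application
        self._on_loan = {}       # events the application currently holds
        self._next_id = 0

    def post(self, kind: str, peer: str) -> None:
        """Simulate the library generating an event for some peer."""
        self._pending.append(Event(self._next_id, kind, peer))
        self._next_id += 1

    def poll(self) -> Optional[Event]:
        """Non-blocking: hand out the next event, or None if there is none."""
        if not self._pending:
            return None
        event = self._pending.popleft()
        self._on_loan[event.event_id] = event
        return event

    def return_event(self, event: Event) -> None:
        """Give an event back to the endpoint; any order is accepted."""
        del self._on_loan[event.event_id]

    def on_loan(self) -> int:
        """Number of events the application has not yet returned."""
        return len(self._on_loan)


ep = ToyEndpoint()
ep.post("SEND", "peer-a")
ep.post("CONNECT_REQUEST", "peer-b")
ep.post("SEND", "peer-c")

first, second, third = ep.poll(), ep.poll(), ep.poll()
ep.return_event(third)  # returned before the two earlier events
ep.return_event(first)
```

In this sketch the application still holds `second` after the two returns, which mirrors the idea that an event stays valid until the application explicitly gives it back.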

Connection

CCI uses connections to allow an application to choose the level of service that best fits its needs and to provide fault isolation should a peer stop responding. CCI does not, however, allocate buffers per peer, since doing so would reduce scalability.

CCI offers choices of reliability and ordering. Some applications such as distributed filesystems need to know that data has arrived at the peer. A health monitoring application, on the other hand, may want to send data that has a very short lifetime and does not want to expend any efforts on retransmission. CCI can accommodate both.

CCI provides Unreliable-Unordered (UU), Reliable-Unordered (RU), and Reliable-Ordered (RO) connections, as well as UU with send multicast and UU with receive multicast. Most RPC-style applications do not require ordering and can take advantage of RU connections. When ordering is not required, CCI can take better advantage of multiple network paths.
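As a rough illustration of how an application might map its requirements onto these connection types, the following Python sketch picks the weakest unicast service that satisfies two stated needs. The enum and the `choose_conn_type` helper are hypothetical and are not part of CCI; only the type names (UU, RU, RO and the two multicast variants) come from the text.

```python
from enum import Enum


class ConnType(Enum):
    """Connection types named in the text; the enum itself is illustrative."""
    UU = "unreliable-unordered"
    RU = "reliable-unordered"
    RO = "reliable-ordered"
    UU_MC_TX = "UU with send multicast"
    UU_MC_RX = "UU with receive multicast"


def choose_conn_type(needs_delivery: bool, needs_ordering: bool) -> ConnType:
    """Pick the weakest unicast service that satisfies the stated needs."""
    if needs_ordering:
        # The text lists no unreliable-ordered option, so ordering implies RO.
        return ConnType.RO
    if needs_delivery:
        return ConnType.RU
    return ConnType.UU


# Short-lived health data: no retransmission wanted.
health_monitor = choose_conn_type(needs_delivery=False, needs_ordering=False)
# RPC-style traffic: must arrive, order does not matter.
rpc_service = choose_conn_type(needs_delivery=True, needs_ordering=False)
# A stream whose messages must arrive in sequence.
ordered_stream = choose_conn_type(needs_delivery=True, needs_ordering=True)
```

Choosing the weakest sufficient service follows the text's observation that dropping the ordering requirement leaves more freedom in how messages travel.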

Communication

CCI has two modes of communication: active messages (AM) and remote memory access (RMA).

CCI's active messages are loosely based on Berkeley's Active Messages and are small (typically MTU-sized). Unlike Berkeley's AM, the message header does not include a handler address. Instead, the receiver's CCI library generates a receive event that includes a pointer to the data within the CCI library's buffers. The application may inspect the data, copy it out if it is needed longer term, or even forward the data by passing it to the send function. By using events instead of handlers, the application does not block further communication progress.
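The receive path described above can be modeled in a few lines of Python. This is a sketch under stated assumptions, not CCI code: `ToyReceiver`, `deliver`, `return_event`, and `free_slots` are hypothetical names, and a `memoryview` stands in for the pointer into the library's buffers. It shows why data needed longer term has to be copied out before the event is returned.

```python
class ToyReceiver:
    """Toy model of a library-owned receive buffer split into MTU-sized slots."""

    def __init__(self, slots: int, mtu: int) -> None:
        self._mtu = mtu
        self._view = memoryview(bytearray(slots * mtu))
        self._free = list(range(slots))

    def deliver(self, payload: bytes):
        """Simulate an arriving message; return (slot, view) as the receive event."""
        if len(payload) > self._mtu:
            raise ValueError("payload exceeds the slot size")
        slot = self._free.pop()
        start = slot * self._mtu
        end = start + len(payload)
        self._view[start:end] = payload
        # The returned view aliases the library buffer; no copy is made here.
        return slot, self._view[start:end]

    def return_event(self, slot: int) -> None:
        """The application is done with the slot; it may now be reused."""
        self._free.append(slot)

    def free_slots(self) -> int:
        return len(self._free)


rx = ToyReceiver(slots=4, mtu=64)
slot, view = rx.deliver(b"status: ok")
kept = bytes(view)      # copy out because the data is needed longer term
rx.return_event(slot)   # after this, the slot's contents may be overwritten
```

Once the slot has been returned, a later message may land in the same memory, so `view` can change while `kept` stays intact.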

When an application needs to move bulk data, CCI provides RMA. To use RMA, the application explicitly registers memory with CCI and receives a handle. The application can pass the handle to a peer using an active message, and the peer can then perform an RMA Read or Write. The RMA may also include a Fence. RMA requires a reliable connection (ordered or unordered). An RMA may optionally include a remote completion message that will be delivered to the peer after the RMA completes. The completion message may be as large as a full active message.
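The RMA steps above (register memory, obtain a handle, write through the handle, optionally deliver a completion message) can be sketched as a toy Python model. Everything here is hypothetical and runs in a single process: `ToyPeer`, `register`, `region`, `rma_write`, and the use of strings for connection types are assumptions made for illustration, not the CCI API.

```python
RELIABLE = {"RU", "RO"}  # the text says RMA needs a reliable connection


class ToyPeer:
    """Toy peer that owns registered memory regions and a message inbox."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.inbox = []          # small messages delivered to this peer
        self._regions = {}       # handle -> registered bytearray
        self._next_handle = 1

    def register(self, memory: bytearray) -> int:
        """Register memory and return an opaque handle for it."""
        handle = self._next_handle
        self._next_handle += 1
        self._regions[handle] = memory
        return handle

    def region(self, handle: int) -> bytearray:
        return self._regions[handle]


def rma_write(conn_type, target, handle, offset, data, completion=None):
    """Write data into the target's registered region, then notify it."""
    if conn_type not in RELIABLE:
        raise ValueError("RMA requires a reliable connection (RU or RO)")
    region = target.region(handle)
    if offset < 0 or offset + len(data) > len(region):
        raise ValueError("write falls outside the registered region")
    region[offset:offset + len(data)] = data
    if completion is not None:
        # Delivered only after the data is in place.
        target.inbox.append(completion)


server = ToyPeer("server")
window = bytearray(16)
handle = server.register(window)
# In CCI the handle would travel to the writing peer in an active message.
rma_write("RU", server, handle, 4, b"bulk", completion=b"done")
```

The completion message gives the target a way to learn that the write has finished without polling the memory itself.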

[tab: Press & Publications]Publications

1. Scott Atchley, David Dillow, Galen Shipman, Patrick Geoffray, Jeffrey M. Squyres, George Bosilca and Ronald Minnich, “The Common Communication Interface (CCI)” in the 19th IEEE Symposium on High Performance Interconnects (HOTI), Santa Clara, CA, August 23-25, 2011. PDF .bib

Slides

1. Galen Shipman, “Common Communication Interface (CCI)”, 19th IEEE Symposium on High Performance Interconnects (HOTI), Santa Clara, CA, August 23-25, 2011. PDF

2. “CCI – An HPC Perspective”, September, 2011. PDF

3. “CCI – A Data Center Perspective”, September, 2011. PDF

4. “The Common Communication Interface (CCI)”, Ethernet Summit, San Jose, CA, Feb. 21-23, 2012. PDF

Talks

1. Galen Shipman and Patrick Geoffray, The Common Communication Interface, presented at OpenFabrics Workshop, 2012

Miscellaneous

1. Cisco HPC Blog featured a two-part discussion of network APIs: Network APIs and CCI.

[tab: Developers]If you are interested in contributing to CCI, several options are open to you. You can port applications to CCI in order to provide feedback, contribute code, perform testing, or write documentation.

Draft API Document

This is a draft API and is subject to change. The connection establishment handling, in particular, is undergoing some simplification.

Draft Routing Design

To enable communication between disjoint networks including wide-area networks, we are working on enabling routing for CCI connections.

CCI Routing Design

Contributor Agreements

Please see the attached CCI Contributor License Agreements (CLAs), one for corporations and the other for individuals. These are based on the MPICH CLAs, which are in turn based on the Apache CLAs, so they should be known quantities to most organizations. If you are participating in CCI, please review the appropriate CLA and return the executed copy to [email protected].

[tab: Partners] The following organizations are participating in CCI.